How AI Impacts PHI Risk Management

Post Summary

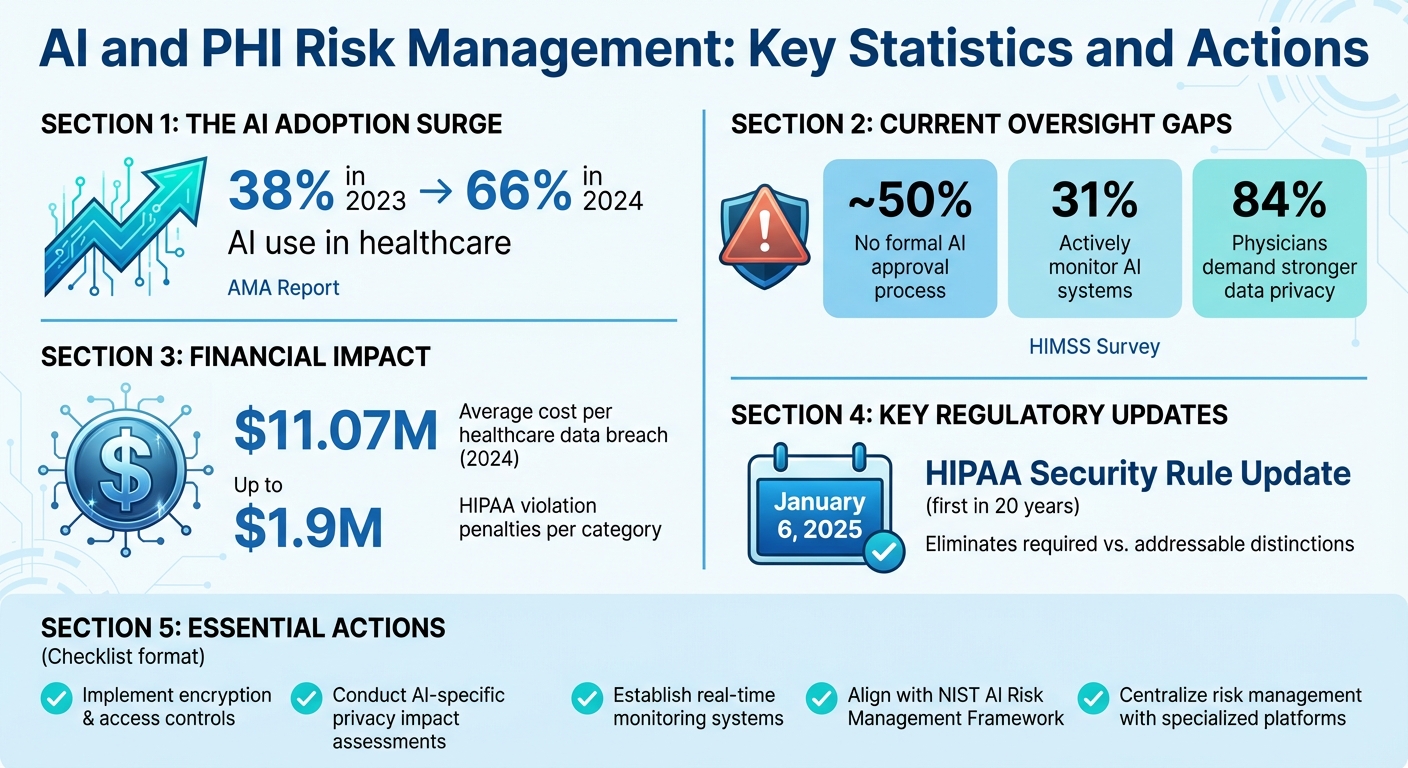

Artificial Intelligence (AI) is reshaping healthcare but also increasing risks to Protected Health Information (PHI). Here's what you need to know:

- AI systems rely on large datasets, including PHI, which heightens exposure risks in data pipelines, storage, and workflows.

- A proposed 2025 update to the HIPAA Security Rule would impose stricter encryption, risk management, and monitoring standards that extend to AI systems.

- Common risks include data breaches, AI-driven cyberattacks, and compliance challenges due to "black box" AI models and algorithmic bias.

- Most healthcare organizations lack adequate AI oversight - nearly half have no approval process, and only 31% monitor AI systems.

- The NIST AI Risk Management Framework offers tools to address AI-specific risks, emphasizing security, explainability, and privacy.

Key Actions for Risk Reduction:

- Implement strong encryption, access controls, and real-time monitoring for AI systems.

- Conduct AI-specific privacy impact assessments and ensure compliance with updated HIPAA standards.

- Use platforms like Censinet RiskOps™ to centralize risk management, automate vendor assessments, and track compliance metrics.

Healthcare providers must act now to secure PHI in AI workflows, balancing innovation with stronger protections.

AI and PHI Risk Management: Key Statistics and Actions for Healthcare Organizations



How AI Creates New Risks for PHI Security

AI is reshaping the security landscape for Protected Health Information (PHI). Unlike traditional systems that handle PHI in predictable ways, AI processes data from diverse sources like electronic health records (EHRs), imaging systems, and patient portals. This creates a broader attack surface spanning training data, embeddings, storage, and logs. If these data flows aren’t properly segmented or encrypted, the chances of exposure increase significantly[3][5].

Larger Data Exposure and Breach Risks

AI’s reliance on vast amounts of PHI introduces new vulnerabilities. Data flow mapping often reveals weak points where electronic PHI (ePHI) may pass through unencrypted channels or unsecured logs. Even worse, failures in de-identification can cause AI models to unintentionally retain sensitive details, making re-identification possible and complicating compliance with HIPAA when data is stored or shared[1][3][5].
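
One way to gauge re-identification risk before PHI-derived data reaches a training pipeline is a k-anonymity check: every combination of quasi-identifiers (such as a truncated ZIP code, birth year, and sex) should be shared by at least k records. The minimal Python sketch below assumes tabular records stored as dictionaries; the column names, sample rows, and threshold are hypothetical. k-anonymity is one heuristic that can inform an Expert Determination review, not a substitute for HIPAA's Safe Harbor or Expert Determination de-identification methods.

```python
from collections import Counter

def equivalence_classes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)

def k_anonymity(records, quasi_identifiers):
    """Return k: the size of the smallest equivalence class (1 means someone is unique)."""
    classes = equivalence_classes(records, quasi_identifiers)
    if not classes:
        raise ValueError("no records to evaluate")
    return min(classes.values())

def risky_combinations(records, quasi_identifiers, k=5):
    """List quasi-identifier combinations shared by fewer than k records."""
    classes = equivalence_classes(records, quasi_identifiers)
    return [combo for combo, count in classes.items() if count < k]

# Hypothetical training rows with direct identifiers already removed.
training_rows = [
    {"zip3": "021", "birth_year": 1980, "sex": "F", "dx": "E11.9"},
    {"zip3": "021", "birth_year": 1980, "sex": "F", "dx": "I10"},
    {"zip3": "021", "birth_year": 1975, "sex": "M", "dx": "J45.909"},
]
qi = ["zip3", "birth_year", "sex"]
print(k_anonymity(training_rows, qi))            # 1
print(risky_combinations(training_rows, qi, 2))  # [('021', 1975, 'M')]
```

A result of 1 means at least one patient is unique on those attributes and could be re-identified by linking to outside data; generalizing values (for example, birth year to decade) or suppressing rare rows raises k.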

A concerning issue is the lack of oversight. According to HIMSS surveys, nearly half of healthcare organizations don’t have formal AI approval processes, and only 31% actively monitor their AI systems[4]. Real-world examples highlight these risks: in some cases, staff have used public AI tools for tasks like note transcription, inadvertently exposing PHI. This creates "shadow AI" vulnerabilities that bypass established security protocols[5]. These gaps not only increase the risk of breaches but also open the door for more advanced AI-driven cyberattacks.
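
Shadow AI often shows up first in web proxy or DNS logs. The Python sketch below flags requests to known AI services that are not on an approved list. The log format, domain names, and both lists are hypothetical placeholders; a real control would pull them from the organization's proxy and its AI approval records, and would still need a way to catch tools it has not seen before.

```python
from urllib.parse import urlparse

# Hypothetical domain lists; in practice these would come from the AI approval process.
KNOWN_AI_DOMAINS = {
    "public-chatbot.example.com",
    "free-transcriber.example.net",
    "approved-ai.example.org",
}
APPROVED_AI_DOMAINS = {"approved-ai.example.org"}

def flag_shadow_ai(log_lines):
    """Return (user, host) pairs for requests to known AI services that are not approved.

    Each line is assumed to be "<timestamp> <user> <url>", separated by whitespace.
    """
    findings = []
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        _, user, url = parts[:3]
        host = (urlparse(url).hostname or "").lower()
        if host in KNOWN_AI_DOMAINS and host not in APPROVED_AI_DOMAINS:
            findings.append((user, host))
    return findings

proxy_log = [
    "2025-03-01T09:14Z alice https://public-chatbot.example.com/c/123",
    "2025-03-01T09:15Z bob https://approved-ai.example.org/v1/notes",
    "2025-03-01T09:16Z carol https://intranet.example.org/home",
]
print(flag_shadow_ai(proxy_log))  # [('alice', 'public-chatbot.example.com')]
```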

AI-Powered Cyber Threats

AI adds a new dimension to cyber threats, enabling more sophisticated and adaptive attacks. For example, ransomware now uses machine learning to adjust to defenses, while generative AI makes phishing campaigns more personalized and harder to detect[7][5]. Attackers can also exploit AI through prompt injection, manipulating chatbots or models to extract PHI. Adversarial inputs might even trick diagnostic AI systems, leading to data leaks or clinical errors[5].

These threats are fundamentally different from traditional cyberattacks. Healthcare organizations now face adaptive threats that evolve over time, targeting everything from cloud storage to medical devices and AI-integrated workflows. This expanded attack surface allows for data poisoning, model manipulation, and Data Loss Prevention (DLP) evasion through subtle AI-generated outputs that leak PHI[3][5]. The rise of AI-enabled ransomware was a key driver behind the HIPAA Security Rule update that the Department of Health and Human Services (HHS) proposed on January 6, 2025, which would require stricter risk management protocols for AI workflows[1].

Compliance Challenges with AI in Healthcare

AI presents HIPAA compliance challenges that traditional systems don’t. One major issue is the opaque nature of "black box" AI models, which makes auditing difficult and complicates informed consent, particularly when patient data is used to train algorithms without clear authorization[1][2]. The proposed 2025 HIPAA update responds by removing the distinction between addressable and required safeguards and by requiring formal risk analyses before AI deployment[1][3].
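
A formal risk analysis before deployment can be as simple as scoring each identified threat and refusing to go live while any score exceeds an agreed threshold. The sketch below uses a likelihood-times-impact scale, a common risk-analysis convention that HIPAA itself does not prescribe; the 1-5 scales, the threshold of 12, and the example findings are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    threat: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minimal) to 5 (severe harm to patients or PHI)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

def deployment_gate(risks, max_score=12):
    """Approve only if no risk scores above max_score; return (approved, blocking risks, highest first)."""
    blocking = sorted(
        (r for r in risks if r.score > max_score),
        key=lambda r: r.score,
        reverse=True,
    )
    return (not blocking, blocking)

# Hypothetical findings from a pre-deployment risk analysis of an AI scribe.
findings = [
    Risk("PHI retained in prompt logs", likelihood=4, impact=4),      # 16
    Risk("Model inversion on training data", likelihood=2, impact=5),  # 10
    Risk("Vendor BAA not yet signed", likelihood=3, impact=5),         # 15
]
approved, blocking = deployment_gate(findings)
print(approved)                      # False
print([r.threat for r in blocking])  # ['PHI retained in prompt logs', 'Vendor BAA not yet signed']
```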

Another challenge is algorithmic bias. When training data is unbalanced, AI can produce discriminatory outcomes that raise fairness and non-discrimination concerns and conflict with the guidance in NIST’s AI Risk Management Framework[1][4]. The lack of explainability in AI systems also complicates maintaining audit trails and demonstrating compliance. Managing third-party AI risk through Business Associate Agreements, conducting privacy impact assessments for data flows, and meeting the 60-day breach notification requirement all become more complex when AI is involved[1][2].
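
Bias reviews usually start by comparing how often a model produces a positive outcome for each group. The sketch below computes per-group selection rates and the ratio of the lowest to the highest. The 0.8 cutoff is the "four-fifths" rule of thumb from US employment-selection guidance, used here only as an illustrative trigger for review; neither HIPAA nor the NIST AI RMF sets that number, and the data is hypothetical.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions within each demographic group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(predictions, groups):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical output of a model that flags patients for a care-management program.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
group = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(selection_rates(preds, group))  # {'A': 0.75, 'B': 0.25}
ratio = disparate_impact_ratio(preds, group)
if ratio < 0.8:  # "four-fifths" heuristic borrowed from US employment guidance
    print(f"Review for bias: ratio {ratio:.2f}")  # Review for bias: ratio 0.33
```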

Regulatory Frameworks for AI and PHI

Healthcare organizations adopting AI are navigating a rapidly evolving regulatory environment, as AI introduces new risks to Protected Health Information (PHI). On January 6, 2025, the HHS Office for Civil Rights (OCR) proposed a major update to the HIPAA Security Rule, the first in two decades. The proposal responds to growing ransomware threats and the demand for stronger cybersecurity protections. It would eliminate the distinction between "required" and "addressable" safeguards and replace that flexibility with specific standards that apply to AI-driven PHI workflows[1]. These changes call for more robust technical and administrative strategies, discussed in the following sections.

HIPAA Security Rule Updates and Their Implications

The proposed 2025 HIPAA update would compel healthcare organizations to take a fresh look at how they integrate AI into their operations. Both vendors and covered entities would need to reassess their security measures before embedding AI into clinical or administrative workflows, so that PHI is better protected under the new, stricter standards[1].

Key requirements include implementing comprehensive safeguards and conducting detailed risk analyses, such as mapping PHI flows[2][6]. The update would remove the flexibility organizations once had in applying certain safeguards. For example, Business Associate Agreements (BAAs) with AI vendors would need to explicitly provide for strong encryption, least privilege access, and continuous system monitoring. Non-compliance would also carry greater enforcement risk, as the revised rule would hold organizations more accountable for PHI breaches in AI workflows[5][6].

AI systems can no longer be treated like traditional IT infrastructure. Instead, they demand specialized risk assessments that account for vulnerabilities unique to AI technologies[1].

NIST AI Risk Management Framework

To complement HIPAA's stricter mandates, the National Institute of Standards and Technology (NIST) provides a framework for managing AI-specific risks. The NIST AI Risk Management Framework (AI RMF) offers detailed guidance that goes beyond HIPAA's focus on PHI protection. It emphasizes principles like validity, reliability, security, explainability, and fairness[1][6]. Using its four core functions - Govern, Map, Measure, and Manage - the framework helps align AI governance with HIPAA's requirements.

Healthcare organizations can use the NIST AI RMF alongside HIPAA by defining AI use cases, mapping PHI flows, and analyzing risks specific to AI systems. This involves aligning AI controls with HIPAA standards and validating them through rigorous testing[1][6]. Additionally, organizations should conduct privacy impact assessments tailored to AI, examining data flows, inference risks, and patient consent processes. Ongoing monitoring of PHI interactions and staff training on AI-related risks are also critical steps[2][6].
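
Mapping PHI flows is easier to keep current when the map itself is data. The sketch below records each hop in an AI workflow and lists the PHI-bearing hops that lack encryption. The system names and the two checks are hypothetical, and a real assessment would also cover access scope, retention, and inference risks.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Flow:
    source: str
    destination: str
    carries_phi: bool
    encrypted_in_transit: bool
    encrypted_at_rest: bool  # at the destination

def flow_findings(flows):
    """List PHI-bearing hops that lack transport or storage encryption."""
    findings = []
    for f in flows:
        if not f.carries_phi:
            continue
        hop = f"{f.source} -> {f.destination}"
        if not f.encrypted_in_transit:
            findings.append(f"{hop}: PHI sent without transport encryption")
        if not f.encrypted_at_rest:
            findings.append(f"{hop}: PHI stored unencrypted at destination")
    return findings

# Hypothetical inventory for an AI documentation assistant.
inventory = [
    Flow("EHR", "feature-store", True, True, True),
    Flow("inference-api", "debug-logs", True, True, False),
    Flow("scheduler", "metrics-db", False, False, False),
]
for finding in flow_findings(inventory):
    print(finding)  # inference-api -> debug-logs: PHI stored unencrypted at destination
```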

How to Reduce AI-Related Risks to PHI

To tackle the risks associated with AI and protected health information (PHI), healthcare organizations need a multi-layered approach that blends technical safeguards, administrative protocols, and governance measures to address vulnerabilities unique to AI. This approach also prepares organizations to meet the stricter HIPAA Security Rule standards proposed in January 2025.

Technical Safeguards for Secure AI Deployment

Securing PHI in an AI environment starts with encryption, both at rest and in transit, using customer-managed keys. Microsoft Azure OpenAI Service is one example: it is covered under Microsoft's HIPAA business associate agreement (BAA) and supports private network access and content filtering for AI-generated outputs.

Access control is another key piece. Implementing multi-factor authentication (MFA) and role-based access control (RBAC) ensures that PHI is only accessible to those who absolutely need it. Audit logs provide a trail of every interaction with PHI, while automated anomaly detection can flag unusual access patterns in real time. For secure data integration, AI systems should connect with electronic health records (EHR) via HL7 FHIR standards and OAuth, ensuring real-time synchronization without exposing unnecessary patient details.
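
Role-based access control, an MFA check, and an audit trail can sit in a single authorization path, so that denied requests are recorded as well as granted ones. The sketch below is illustrative only: the roles, permission names, and FHIR-style resource IDs are hypothetical, and the in-memory list stands in for a real tamper-evident log store.

```python
import json
from datetime import datetime, timezone

# Hypothetical role-to-permission map: each role gets only what its work requires.
ROLE_PERMISSIONS = {
    "clinician": {"read_phi", "write_notes"},
    "ml_engineer": {"read_deidentified"},
    "billing": {"read_billing"},
}

AUDIT_LOG = []  # stand-in for an append-only, tamper-evident audit store

def authorize(user, role, permission, resource, mfa_verified):
    """Allow only if MFA succeeded and the role grants the permission; log every attempt."""
    allowed = mfa_verified and permission in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "permission": permission,
        "resource": resource,
        "mfa": mfa_verified,
        "allowed": allowed,
    }))
    return allowed

print(authorize("dr_lee", "clinician", "read_phi", "Patient/123", mfa_verified=True))        # True
print(authorize("svc-trainer", "ml_engineer", "read_phi", "Patient/123", mfa_verified=True))  # False
print(len(AUDIT_LOG))  # 2
```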

Machine learning-powered tools can also scan datasets to identify and de-identify sensitive information, reducing the chances of breaches. Regular audits, penetration testing, data isolation, and rate limiting further enhance the technical security of AI systems. These steps create a strong technical foundation that works hand-in-hand with administrative measures.
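
The scanning step can be illustrated with simple pattern matching. The sketch below uses regular expressions as a stand-in for the machine-learning detectors described above; the patterns are hypothetical and cover only a few formatted identifiers, so names, dates, and free-text details would be missed entirely.

```python
import re

# Illustrative patterns only; production PHI detection needs NLP/ML models and broader coverage.
PHI_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "MRN": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),
}

def scan_for_phi(text):
    """Return (label, matched_text) pairs for every pattern hit, in order of appearance."""
    hits = []
    for label, pattern in PHI_PATTERNS.items():
        hits.extend((m.start(), label, m.group()) for m in pattern.finditer(text))
    return [(label, value) for _, label, value in sorted(hits)]

note = "Pt MRN: 00123456, call 617-555-0142 or j.doe@example.com. SSN 123-45-6789."
# Prints the MRN, phone, email, and SSN hits in the order they appear in the note.
for label, value in scan_for_phi(note):
    print(label, value)
```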

Administrative Measures for Risk Management

Managing third-party risk when choosing AI vendors is crucial. Look for vendors with documented security controls, SOC 2 reports, and strict BAAs that explicitly prevent unauthorized use of data. Conduct privacy impact assessments tailored to AI systems, and provide staff with training on issues like data leakage, algorithmic bias, and incident response.
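
Vendor due diligence is easier to audit when the required evidence is an explicit checklist. The sketch below reports which items an AI vendor has not yet supplied; the checklist keys and wording are hypothetical and would come from the organization's own vendor risk policy.

```python
# Hypothetical minimum evidence an AI vendor must provide before approval.
REQUIRED_EVIDENCE = {
    "signed_baa": "Signed business associate agreement",
    "baa_limits_data_use": "BAA prohibits unauthorized use of PHI (e.g., training vendor models)",
    "soc2_type2_report": "Current SOC 2 Type II report",
    "encryption": "Documented encryption at rest and in transit",
    "incident_notice_terms": "Contractual breach and incident notification terms",
}

def vendor_gaps(evidence):
    """Return descriptions of required items the vendor has not yet satisfied."""
    return [desc for key, desc in REQUIRED_EVIDENCE.items() if not evidence.get(key, False)]

submitted = {"signed_baa": True, "soc2_type2_report": True, "encryption": True}
for gap in vendor_gaps(submitted):
    print("Missing:", gap)
```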

To stay prepared, healthcare organizations should engage in regular threat modeling and incident response exercises that address AI-specific challenges. Early collaboration with legal and compliance teams ensures accountability throughout the AI model’s lifecycle and helps organizations stay ahead of regulatory changes. When combined with technical safeguards and governance measures, these administrative actions strengthen overall risk management.

Continuous Monitoring and AI Governance

Real-time monitoring, automated anomaly detection, and consistent audit reviews are essential for identifying and addressing irregular access to PHI. Organizations should also have clear incident response protocols in place for handling compromised models, data remediation, and rolling back affected AI systems.
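
A simple form of automated anomaly detection compares each user's PHI access volume today against that user's own history. The sketch below uses a z-score over daily record-access counts; the three-standard-deviation threshold and the sample data are hypothetical, and a production system would likely combine more signals, such as time of day or whether a treatment relationship exists.

```python
from statistics import mean, stdev

def access_anomalies(history, today, z_threshold=3.0):
    """Flag users whose PHI record-access count today is far above their own baseline.

    history maps user -> list of past daily access counts; today maps user -> today's count.
    Returns user -> z-score for flagged users.
    """
    flagged = {}
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < 2:
            continue  # too little baseline for stdev; such users need a different review path
        mu, sigma = mean(past), stdev(past)
        if sigma == 0:
            z = float("inf") if count > mu else 0.0
        else:
            z = (count - mu) / sigma
        if z > z_threshold:
            flagged[user] = z
    return flagged

history = {"alice": [20, 22, 19, 21, 18], "bob": [5, 6, 5, 7, 6]}
today = {"alice": 23, "bob": 140}
print(sorted(access_anomalies(history, today)))  # ['bob']
```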

Establishing an AI governance framework is critical. This framework should assign clear responsibilities for PHI security across engineering, cybersecurity, and clinical teams. It should also include policies for addressing algorithmic bias, conducting regular risk assessments, and maintaining transparent reporting mechanisms. Aligning these governance practices with the NIST AI Risk Management Framework - focused on principles like validity, reliability, and privacy - helps ensure long-term compliance as AI technologies and regulations evolve.

Building Scalable PHI Governance with AI

The American Medical Association (AMA) reports that the use of AI in healthcare surged from 38% in 2023 to 66% in 2024 [10]. This rapid adoption presents a growing challenge: safeguarding Protected Health Information (PHI) across increasingly complex data environments. Traditional, manual methods simply can't keep up with the sheer volume of data generated by EHRs, imaging systems, labs, and diagnostic tools. This is where AI-powered automation steps in, providing a more efficient way to discover, classify, and protect PHI. These automated solutions build on earlier discussions about technical safeguards and continuous monitoring.

AI-Driven Automation for Data Management

Natural Language Processing (NLP) allows AI systems to extract PHI from unstructured clinical notes while distinguishing it from similar, non-sensitive content. Through data intelligence platforms, healthcare datasets are automatically scanned, PHI fields are cataloged, and protection measures are suggested - eliminating the need for manual input.

Real-time de-identification pipelines use techniques like masking or tokenization to secure data based on its sensitivity and intended use across various systems. Importantly, modern AI systems excel at contextual classification, enabling them to differentiate between sensitive and non-sensitive data. For example, they can distinguish a patient’s name from a medication name, a critical capability since even small data points - like prescription records or genomic profiles - can inadvertently identify individuals if mishandled [9].
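
The difference between masking and tokenization is easy to see in code. In the sketch below, masking irreversibly hides most of a value for display, while tokenization replaces an identifier with a keyed HMAC-SHA256 pseudonym so datasets can still be linked. This is one assumed approach (vault-based tokenization is a common alternative when the original value must be recoverable), the key is a placeholder, and pseudonymized data like this should still be treated as PHI unless a formal de-identification determination says otherwise.

```python
import hashlib
import hmac

def mask(value: str, visible: int = 4, fill: str = "*") -> str:
    """Irreversibly hide all but the last `visible` characters (suited to display use)."""
    if visible <= 0:
        return fill * len(value)
    return fill * max(len(value) - visible, 0) + value[-visible:]

def tokenize(value: str, key: bytes) -> str:
    """Deterministic keyed token (HMAC-SHA256): the same value and key give the same token,
    so records can still be joined across systems without exposing the raw identifier."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]

key = b"demo-only-key"  # hypothetical; a real key would live in a KMS or HSM
print(mask("123-45-6789"))  # *******6789
print(tokenize("MRN00123456", key) == tokenize("MRN00123456", key))  # True
```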

Automated discovery tools, when paired with policy enforcement, ensure consistent application of safeguards. Solutions like Censinet Connect™ Copilot further streamline this by automatically answering security questionnaires and assessments. This kind of automation addresses a major concern: 84% of physicians have called for stronger data privacy measures before fully embracing AI [10]. Additionally, the financial stakes are high - healthcare data breaches averaged $11.07 million per incident in 2024 [10]. These factors make automated governance not just a convenience but a necessity.

Measuring PHI Governance Success

To evaluate the effectiveness of PHI governance, organizations can focus on several key metrics:

- Enterprise-wide automated protection: Ensuring all healthcare data sources are covered by automated safeguards.

- Identification accuracy: Measuring how precisely PHI is detected in unstructured data, reducing false negatives that could lead to data leaks.

- Compliance adherence: Tracking alignment with regulations like HIPAA, aiming for zero non-compliance incidents.

- Audit trail completeness: Confirming that every AI interaction with PHI is logged, providing full traceability for regulatory reviews [9].
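
Several of these metrics reduce to simple ratios. The sketch below computes detection precision and recall against a hand-labeled sample, plus a coverage-style ratio that can serve for both data-source coverage and audit-trail completeness. The span format, source names, and sample data are hypothetical; exact-match scoring is a simplifying assumption, since many evaluations also credit partial overlaps.

```python
def detection_metrics(gold_spans, detected_spans):
    """Precision and recall of PHI detection against a hand-labeled gold set.

    Spans are (doc_id, start, end) tuples; only exact span matches count.
    """
    gold, found = set(gold_spans), set(detected_spans)
    true_pos = len(gold & found)
    return {
        "precision": true_pos / len(found) if found else 1.0,
        "recall": true_pos / len(gold) if gold else 1.0,
        "false_negatives": len(gold - found),  # missed PHI: the leak risk
    }

def ratio(covered, total):
    """Share of `total` items that also appear in `covered` (e.g., sources or logged interactions)."""
    total = set(total)
    return len(set(covered) & total) / len(total) if total else 1.0

gold = [("note1", 0, 8), ("note1", 20, 31), ("note2", 5, 16)]
found = [("note1", 0, 8), ("note2", 5, 16), ("note2", 40, 45)]
print(detection_metrics(gold, found))  # precision 2/3, recall 2/3, 1 false negative
print(ratio(["ehr", "pacs"], ["ehr", "pacs", "lab", "portal"]))  # 0.5 (source coverage)
print(ratio(["req-1", "req-2"], ["req-1", "req-2", "req-3"]))   # audit-trail completeness
```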

These benchmarks provide tangible proof of governance effectiveness, helping organizations justify investments in AI-driven protection. The financial risks are clear: HIPAA violations can result in penalties of up to $1.9 million per violation category per calendar year [10]. By meeting these measurable goals, organizations can mitigate risks while building trust in their data management practices.

Using Censinet for AI-Powered PHI Risk Management

Almost half of healthcare organizations lack an established AI approval process, and only 31% actively monitor their systems, leaving them vulnerable to breaches of Protected Health Information (PHI) [4]. As AI tools become more integrated into clinical and administrative workflows, this gap in oversight increases the risk of exposure. Censinet RiskOps™ steps in to tackle this issue by offering a centralized platform tailored to the complex risk environment of healthcare. It covers everything from assessing third-party vendors to securing medical devices.

Faster Risk Assessments with Censinet RiskOps™

Traditional vendor risk assessments can drag on for weeks, creating delays and inefficiencies. Censinet RiskOps™ changes the game by drastically reducing this timeline - vendors can now complete security questionnaires in seconds. The platform automates key steps, including summarizing vendor evidence, capturing integration details, identifying fourth-party risks, and producing concise risk reports.

This level of automation helps keep unauthorized AI tools from slipping past IT oversight [5]. By centralizing vendor vetting and documenting workflows in one platform, healthcare organizations can more readily block unapproved AI tools from accessing patient data. Additionally, the platform supports AI-specific privacy impact assessments by mapping how PHI flows through systems and applying Data Loss Prevention (DLP) filters to secure sensitive information in AI prompts and outputs [3]. These streamlined assessments work hand-in-hand with ongoing technical safeguards and continuous monitoring strategies.

AI Governance and Collaboration with Censinet

Censinet goes beyond technical safeguards to strengthen AI governance at an organizational level. Acting as a centralized hub, the platform facilitates collaboration by directing assessment results and assigning specific tasks to key stakeholders - whether privacy officers, clinical leaders, or IT security teams. For instance, when a new AI vendor needs evaluation or an existing system shows anomalies, Censinet automatically routes these tasks to the appropriate team members.

The platform’s AI risk dashboard provides a real-time, unified view of all AI-related policies, risks, and tasks. This approach aligns with the principles outlined in the NIST AI Risk Management Framework, focusing on areas like validity, reliability, security, and privacy [1][6]. Healthcare organizations can use the dashboard to monitor PHI leakage rates, flag access anomalies, and track compliance metrics. It also maintains detailed audit trails for every AI interaction with patient data, which is critical for meeting HIPAA’s 60-day breach notification requirement [3]. This centralized governance model supports the broader goal of balancing AI adoption with strengthened protections for PHI.
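
The 60-day clock is simple date arithmetic, which makes it easy to surface on a dashboard. The sketch below is not Censinet's implementation or API; it is a generic illustration of the Breach Notification Rule's outer limits as commonly summarized: individuals within 60 calendar days of discovery, HHS within the same window when 500 or more people are affected, and otherwise within 60 days after the end of the calendar year of discovery. The 60 days is a ceiling rather than a target, since notice is due without unreasonable delay.

```python
from datetime import date, timedelta

def individual_notice_deadline(discovered: date) -> date:
    """Outer limit for notifying affected individuals: 60 calendar days after discovery."""
    return discovered + timedelta(days=60)

def hhs_notice_deadline(discovered: date, affected: int) -> date:
    """500 or more affected: same 60-day outer limit.
    Fewer than 500: no later than 60 days after the end of the calendar year of discovery."""
    if affected >= 500:
        return individual_notice_deadline(discovered)
    return date(discovered.year, 12, 31) + timedelta(days=60)

found_on = date(2025, 3, 10)
print(individual_notice_deadline(found_on))  # 2025-05-09
print(hhs_notice_deadline(found_on, 120))    # 2026-03-01
```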

Conclusion

AI is transforming healthcare delivery, but it also brings new vulnerabilities to Protected Health Information (PHI) that demand immediate attention. Striking a balance between progress and security isn't just a choice - it’s a regulatory and ethical responsibility. Healthcare organizations that neglect to integrate privacy safeguards into their AI governance frameworks face the risk of HIPAA violations and a loss of patient trust.

The way forward calls for preventive strategies, not just reactive fixes. For example, conducting AI-specific privacy impact assessments and implementing continuous monitoring are critical steps [1]. These actions help organizations stay ahead in adopting responsible AI while ensuring compliance with evolving standards, such as the HIPAA Security Rule update proposed on January 6, 2025, and the NIST AI Risk Management Framework [1][8].

Effective governance ensures that AI improves patient care without undermining HIPAA protections [2]. This includes applying strong encryption, enforcing strict access controls, securing vendor agreements, and conducting adversarial testing. The move toward "secure by design" principles in AI medical devices and vendor management highlights the importance of supply chain transparency and lifecycle oversight [8].

Platforms like Censinet RiskOps™ further simplify these governance challenges. By centralizing risk management, automating assessments, and providing real-time visibility into AI-related risks, Censinet RiskOps™ enables healthcare organizations to manage PHI governance efficiently without compromising speed or accuracy. This approach ensures that healthcare providers can scale their security efforts while maintaining trust and compliance.

FAQs

Where does PHI leak in AI workflows?

Protected Health Information (PHI) can unintentionally leak in AI workflows due to several vulnerabilities. Common issues include misconfigured access controls, the use of unapproved third-party tools, or the presence of shadow AI systems - AI tools implemented without proper authorization or oversight.

Other risks arise from data poisoning, where malicious actors manipulate training data, and the re-identification of anonymized data, exploiting weaknesses in anonymization techniques. Additionally, inadequate governance can leave sensitive data exposed, especially when security protocols and oversight are lacking.

These leaks often happen when AI systems handling PHI don't have robust security measures in place, leading to the unintended exposure of patient information. Such gaps highlight the importance of strict controls and oversight in safeguarding sensitive healthcare data.

What does the 2025 HIPAA update require for AI?

The proposed 2025 HIPAA update introduces tougher measures designed to tackle the risks AI poses to Protected Health Information (PHI). These measures include:

- Mandatory encryption: Electronic PHI would have to be encrypted at rest and in transit, so data remains protected even if intercepted.

- Multi-factor authentication (MFA): Access to systems handling PHI would require MFA, adding a layer of security beyond passwords.

- Continuous monitoring of AI systems: Ongoing oversight would help detect and prevent data breaches, re-identification of individuals, and unauthorized sharing of PHI.

These proposed changes aim to strengthen safeguards around sensitive health data as AI becomes more integrated into healthcare systems.

How can we stop 'shadow AI' use by staff?

To keep staff from turning to unapproved "shadow AI" tools, healthcare organizations need to take a proactive approach. Start by developing clear policies and setting up governance frameworks that outline what tools are acceptable and how they should be used.

Using centralized platforms like Censinet RiskOps™ can make a big difference. These platforms help you monitor AI tools across the organization, ensure compliance with regulations, and track how these tools are being used.

It’s also critical to educate your staff. Provide training on the potential risks of shadow AI, especially how it can compromise the security and privacy of Protected Health Information (PHI). Finally, conduct regular audits to spot unauthorized tools early and address any issues before they escalate. These steps can significantly reduce security and privacy risks while maintaining trust in your systems.