AI Liability Risks in Healthcare: What to Know

Post Summary

AI in healthcare is advancing quickly, but with it come legal and ethical challenges - especially around liability. Here's what you need to know:

- Liability Concerns: AI developers often avoid responsibility for errors, leaving hospitals and providers accountable.

- Diagnostic Errors: AI-related mistakes, like misdiagnoses, are driving malpractice claims. Physicians face risks whether they follow or reject AI recommendations.

- State Law Variations: Regulations differ widely across states, adding confusion around compliance and responsibility.

- Transparency Issues: AI's "black box" nature makes it hard to assign blame for errors, creating uncertainty for providers.

- Governance Challenges: Healthcare systems must monitor AI performance and maintain human oversight to prevent errors.

To reduce risks, healthcare providers should focus on clear oversight, proper training, and strong contracts with AI vendors. Balancing innovation with accountability is key to ensuring patient safety while using AI tools.

Michelle Mello | Understanding Liability Risk from Healthcare AI Tools

Managing these liabilities is critical as cyberattacks increasingly impact patient care and clinical operations.

Current Trends in AI Liability Risks

AI Liability in Healthcare: Mock Jury Study Results and Key Statistics

The legal framework around AI in healthcare is evolving quickly, presenting new challenges for providers who must navigate a maze of accountability. Research highlights that liability is divided among physicians (for professional negligence), hospitals (for vicarious liability), and AI developers (for product liability) [4]. This division makes it harder to pinpoint responsibility when issues arise.

AI Diagnostic Errors and Malpractice Claims

AI diagnostic errors are becoming a significant driver of malpractice claims. In fact, the misuse of AI chatbots was flagged as a top health tech hazard in 2026 [4]. One contributing factor is automation bias, where clinicians tend to trust AI recommendations even if they conflict with their own judgment [4][3].

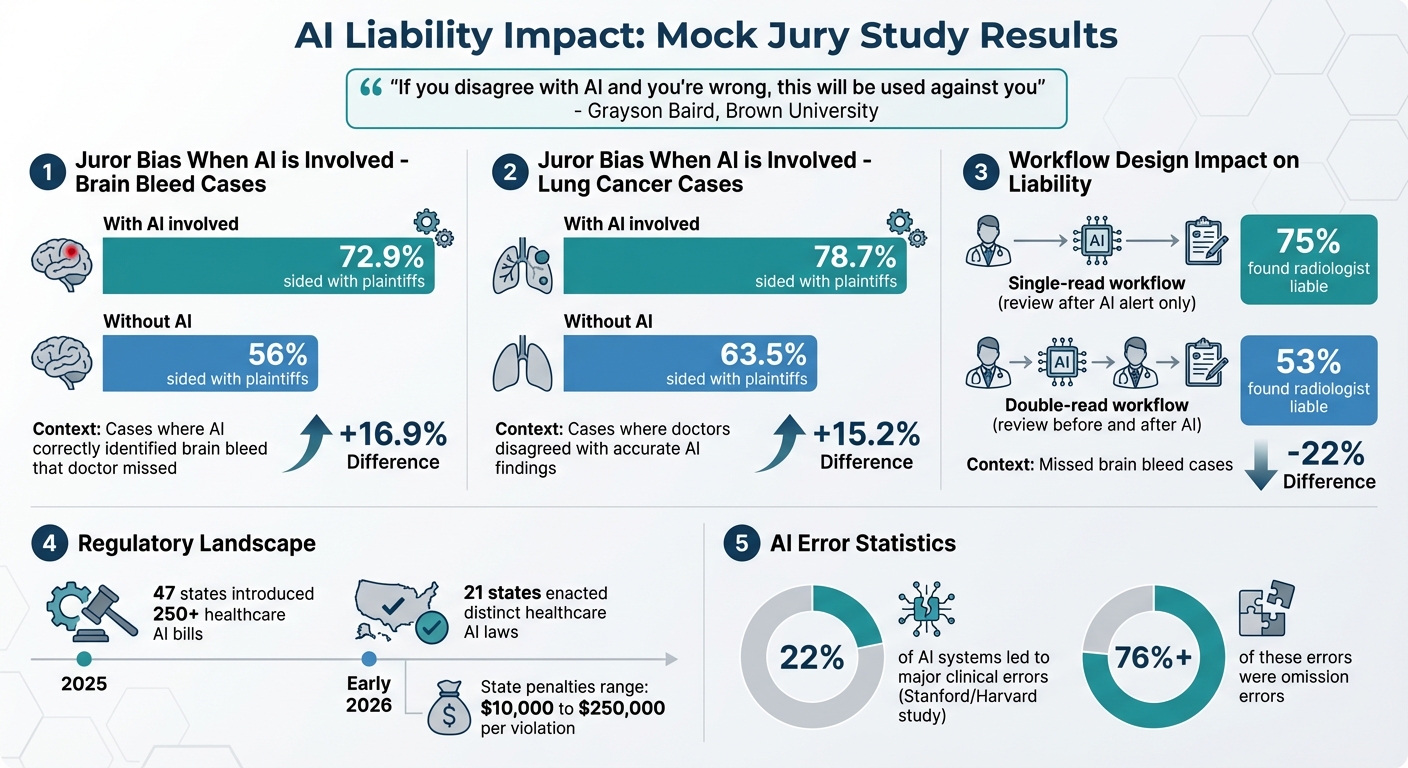

The legal impact of these errors is striking. Mock jury studies reveal that jurors sided with plaintiffs more often when AI was involved. For example, in cases where AI correctly identified a brain bleed that a doctor missed, jurors supported plaintiffs 72.9% of the time, compared to 56% when no AI was used. Similarly, in lung cancer cases, jurors favored plaintiffs 78.7% of the time when doctors disagreed with accurate AI findings, compared to 63.5% without AI [4].

Grayson Baird, Associate Professor of Radiology at Brown University, explains the dilemma facing physicians:

"If you disagree with AI and you're wrong, this will be used against you" [5].

Doctors are caught in a tough spot: they risk liability whether they follow or reject AI recommendations [4]. This challenge is compounded by AI "hallucinations", where generative AI models fabricate nonexistent anatomy or suggest unnecessary, even harmful, tests [4].

Workflow design plays a critical role in liability cases. Jurors are more likely to side with plaintiffs when a radiologist reviews a scan only once after an AI alert, compared to a "double-read" workflow where the scan is reviewed both before and after AI feedback. In one study involving a missed brain bleed, 75% of mock jurors found a radiologist liable with a single review, but this dropped to 53% with a double-read approach [5].
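The size of these gaps is easier to compare side by side. The short Python sketch below only restates the percentages quoted above [4][5] and computes the percentage-point difference for each pair. The labels, variable names, and the `point_gap` helper are illustrative and do not come from the studies themselves.

```python
# Rates quoted in the text, in percent.
# Each entry: (label, rate in the higher-exposure scenario, rate in the comparison scenario)
comparisons = [
    ("Brain bleed, plaintiff verdicts: AI caught it vs. no AI used", 72.9, 56.0),
    ("Lung cancer, plaintiff verdicts: doctor disagreed with AI vs. no AI used", 78.7, 63.5),
    ("Missed brain bleed, liability findings: single read vs. double read", 75.0, 53.0),
]


def point_gap(higher: float, lower: float) -> float:
    """Return the difference in percentage points, rounded to one decimal place."""
    return round(higher - lower, 1)


# Map each comparison label to its percentage-point gap.
gaps = {label: point_gap(higher, lower) for label, higher, lower in comparisons}

for label, gap in gaps.items():
    print(f"{label}: {gap} percentage points")
```

These are differences between mock-jury scenarios, not predictions for any real case.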

AI systems now generate detailed audit logs that document every input and timestamp. Plaintiffs increasingly use these logs to argue that physicians spent insufficient time reviewing AI-generated notes or ignored high-confidence warnings [4]. These developments are adding layers of complexity to state laws and liability determinations.

State Laws and Legal Uncertainty

The regulatory environment for healthcare AI is fragmented. In 2025 alone, 47 states introduced more than 250 healthcare-specific AI bills. By early 2026, 21 states had enacted distinct healthcare AI laws [8].

State regulations vary significantly. Some states allow private lawsuits for AI-related harm, while others restrict actions to state authorities, with penalties ranging from $10,000 to $250,000 per violation [6]. For instance, Illinois prohibits AI from making independent therapeutic decisions, whereas Texas permits AI diagnostic recommendations if reviewed by a practitioner and disclosed to patients [6]. Colorado's SB 24-205, effective June 30, 2026, requires healthcare organizations to conduct impact assessments and notify patients when "high-risk" AI is used in critical decisions [8].

Efforts to create federal uniformity have faced challenges. A December 2025 Executive Order aimed at standardizing national AI policy lacks the authority to override state laws - only Congress can enact such preemptive legislation [7]. The Husch Blackwell Healthcare Privacy and Security Work Group commented:

"The EO cannot invalidate state law. In the meantime, the healthcare industry faces a fragmented and highly varied regulatory landscape from state to state" [7].

Even basic definitions vary across states. Colorado's broad definition of "high-risk" AI and Texas's vague requirement for "practitioner review" leave healthcare providers uncertain about compliance [6]. Juan Pablo Montoya, Founder & CEO of SolumHealth, critiques the current approach:

"We're writing rules for yesterday's technology and calling it governance" [8].

This regulatory patchwork complicates transparency and makes it harder to assign liability.

Algorithm Transparency and Liability Determination

The opaque nature of AI decision-making makes liability assignment a murky process. It’s often unclear whether responsibility for AI errors lies with the physician, the healthcare facility, the AI developer, or the device manufacturer [3]. This has created what some clinicians refer to as a "liability sink", where they are held accountable for algorithmic outputs they cannot fully understand or verify [8].

Dr. Adriana Banozic-Tang, Visiting Fellow at the Centre for Digital Law at Singapore Management University, sheds light on the operational hurdles:

"Health systems implementing AI-enabled home monitoring find that technical performance alone does not determine adoption... The barrier is procedural: healthcare providers consistently raise questions about who responds when the algorithm flags a risk" [3].

In response, regulators have classified many clinical AI tools as "high-risk" systems. Both the EU AI Act and Colorado's SB 24-205 now require mandatory impact assessments and human oversight for these tools [3]. The EU AI Act, in particular, categorizes all AI systems used in medical devices as "high-risk", requiring detailed risk management and extensive technical documentation [3].

This lack of clarity is driving clinicians toward defensive practices. Some over-order tests to confirm AI findings, while others avoid using AI altogether to minimize risk [3]. These trends highlight the growing need for robust risk management strategies in healthcare AI.

AI Governance and Oversight Challenges

Navigating governance challenges is a critical step in addressing the liability risks tied to AI systems in healthcare. One of the biggest hurdles is managing AI systems after they’ve been deployed. Unlike static tools, AI models can experience performance drift, meaning their accuracy and reliability may degrade over time. This makes ongoing human oversight essential to catch errors before they jeopardize patient safety [10]. Adding to the difficulty, many AI platforms operate like black boxes, making it tough to document decisions or maintain proper audit trails [9].

Generative AI systems bring their own set of issues. These tools can sometimes produce fabricated or misleading outputs, which, if not carefully monitored, could result in incorrect treatment plans [10]. Compounding the problem, healthcare organizations often rely on third-party AI tools. Even when they don’t have access to the vendor’s training data or algorithms, they still assume liability for the tool’s outcomes [2][9]. As Traverse Legal points out:

"Once an AI system filters or rejects treatment, legal accountability shifts from intent to impact" [9].

The regulatory landscape only adds to the complexity. For instance, Virginia's H 2154 requires hospitals to establish formal policies for AI personal assistants, California's SB 1120 mandates that licensed providers - not algorithms - make decisions about medical necessity, and Utah's HB 452 requires healthcare professionals to disclose when generative AI is used during patient care [10]. On top of that, the Department of Justice has started issuing subpoenas to digital health companies to investigate whether generative AI in electronic medical records contributes to unnecessary medical care [10]. Morgan Lewis warns that healthcare organizations are at risk:

"Failure to conduct routine monitoring or audits could leave healthcare organizations vulnerable to claims that the organization... knowingly ignored (through deliberate ignorance or reckless disregard) red flags" [10].

Given these challenges, healthcare organizations need strong, multidisciplinary oversight to ensure AI systems are functioning reliably and safeguarding patient care.

Continuous Monitoring After Deployment

Monitoring AI systems after deployment is non-negotiable. Regular monitoring can help detect performance drift, address biases caused by poor training data, and maintain detailed logs of AI decisions and overrides. These practices help limit an organization's exposure under laws like the False Claims Act [2][10]. Multidisciplinary governance committees - made up of legal, IT, clinical, and risk management experts - are instrumental in vetting AI tools and watching for bias [2][10].
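One simple way to picture drift detection is a rolling comparison between recent accuracy and the accuracy measured when the tool was validated. The Python sketch below is a minimal illustration under stated assumptions: the `DriftMonitor` class, the baseline of 0.90, the tolerance of 0.05, and the 10-case window are all hypothetical values chosen for the example, not figures from any vendor or regulation.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Track rolling agreement between AI outputs and confirmed outcomes."""

    def __init__(self, baseline_accuracy: float, tolerance: float, window: int):
        self.baseline_accuracy = baseline_accuracy  # accuracy measured at validation (assumed)
        self.tolerance = tolerance                  # allowed drop before flagging (assumed)
        self.results = deque(maxlen=window)         # most recent correct/incorrect flags

    def record(self, ai_was_correct: bool) -> None:
        """Store whether the AI output matched the confirmed outcome."""
        self.results.append(ai_was_correct)

    def rolling_accuracy(self) -> Optional[float]:
        """Return accuracy over the window, or None until the window is full."""
        if len(self.results) < self.results.maxlen:
            return None
        return sum(self.results) / len(self.results)

    def drift_detected(self) -> bool:
        """Flag drift when rolling accuracy falls below baseline minus tolerance."""
        accuracy = self.rolling_accuracy()
        return accuracy is not None and accuracy < self.baseline_accuracy - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.90, tolerance=0.05, window=10)

# Nine correct outputs and one miss: rolling accuracy is 0.9, within tolerance.
for outcome in [True] * 9 + [False]:
    monitor.record(outcome)
stable = monitor.drift_detected()

# Two more misses push the last ten cases to 7 correct out of 10.
for outcome in [False, False]:
    monitor.record(outcome)
drifted = monitor.drift_detected()

print(stable, drifted)
```

In practice the threshold, window size, and what counts as a "confirmed outcome" would be set by the governance committee, and a flag would trigger human review rather than any automatic action.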

Human-in-the-loop protocols are another vital safeguard. Every AI-driven denial or clinical recommendation should have a clear process for escalation to a qualified human reviewer [9][10]. This not only reduces legal risks but also ensures that human clinical judgment remains central to patient care. Additionally, requiring vendors to meet certifications like SOC 2 or ISO 27001 - and negotiating contracts that include audit rights and clearly assign liability - can further enhance oversight [2]. Leveraging risk management frameworks, such as the NIST AI Risk Management Framework or ISO standards, provides a structured approach to responsible AI deployment and monitoring [2]. These practices are just as important for insurers, who face similar challenges in making AI-driven claims decisions transparent and accountable.
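The escalation requirement described above can be sketched in a few lines of Python. This is an illustration only: the `AIOutput` and `ReviewRecord` types, the `"denial"` and `"recommendation"` categories, and the rule that every review needs a written rationale are assumptions made for the example, not features of any particular product or statute.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List


@dataclass
class AIOutput:
    case_id: str
    kind: str      # hypothetical categories: "denial", "recommendation", "administrative"
    summary: str


@dataclass
class ReviewRecord:
    case_id: str
    reviewer: str
    accepted: bool     # False means the human overrode the AI output
    rationale: str
    reviewed_at: str   # ISO 8601 timestamp in UTC


# Output kinds that must always reach a qualified human reviewer (assumed policy).
REVIEW_REQUIRED = {"denial", "recommendation"}


def needs_human_review(output: AIOutput) -> bool:
    """Return True when policy requires escalation to a human reviewer."""
    return output.kind in REVIEW_REQUIRED


def record_review(output: AIOutput, reviewer: str, accepted: bool,
                  rationale: str, log: List[ReviewRecord]) -> ReviewRecord:
    """Append a review decision to the log; refuse reviews without a rationale."""
    if not rationale.strip():
        raise ValueError("A documented rationale is required for every review")
    record = ReviewRecord(
        case_id=output.case_id,
        reviewer=reviewer,
        accepted=accepted,
        rationale=rationale,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(record)
    return record
```

Keeping the reviewer's rationale alongside each accept or override decision is the design choice that matters here, since it produces the record of human judgment that the oversight practices above depend on.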

AI in Insurance Claims Processing

The insurance industry is also feeling the impact of AI, particularly in claims processing. Insurers are increasingly using AI to evaluate claims, but this shift has sparked legal disputes. For example, class action lawsuits have been filed against insurers accused of using algorithms to override physicians' decisions about medical necessity [10]. These disputes often arise in areas like oncology, behavioral health, and rare diseases, where AI tools may struggle to handle complex or uncommon medical cases [9][10].

Courts are starting to view AI systems as active decision-makers, meaning insurers must take full responsibility for their outputs [9]. As Traverse Legal explains:

"Lack of transparency converts each denial into a legal vulnerability" [9].

When documentation is inconsistent or review processes are unclear, insurers open themselves up to liability claims [9]. To reduce these risks, insurers need clear escalation pathways so human reviewers can overturn automated decisions and provide detailed explanations [9]. Internal teams must also be able to explain how AI systems make decisions and keep thorough records to defend against lawsuits [9]. Even when using third-party algorithms, insurers remain liable, making it crucial to have contracts that enforce audit rights, demand transparency, and clearly define liability.

How to Reduce AI Liability Risks

Healthcare organizations can minimize liability risks tied to AI by focusing on transparency and accountability throughout its integration. The goal is to harness AI's potential while ensuring strong human oversight and clear lines of responsibility at every step.

Building Transparency and Accountability

It's crucial to document every aspect of AI system selection, implementation, and integration. This includes third-party vendor risk assessments, physician validation, and obtaining informed consent for data collection. Such thorough records demonstrate adherence to proper standards of care.

Physicians remain legally responsible for decisions made with AI assistance [12]. This means every AI-generated recommendation must be carefully reviewed to confirm its accuracy and potential benefit for the patient before being implemented.

Starting with low-risk applications is a smart approach. For instance, using AI in administrative tasks or back-office operations typically involves less liability than in diagnostic or treatment-related areas. This allows staff to gain experience with AI systems before introducing them into more critical clinical roles.

Establishing clear escalation processes is another key step. Every AI recommendation should undergo human review, with mechanisms in place to override the system when necessary. Additionally, feedback channels are essential, enabling clinicians to report errors or unexpected outcomes. The need is real: a study by Stanford and Harvard revealed that AI systems led to major clinical errors in 22% of cases, with omission errors accounting for over 76% of these mistakes [11].

Using Risk Management Platforms

Centralized risk management platforms can simplify AI oversight. Tools like Censinet RiskOps™ allow healthcare organizations to thoroughly evaluate AI vendors and systems through comprehensive risk assessments. These platforms ensure HIPAA compliance, validate AI performance, and monitor adherence to changing regulations, all within a single hub. This streamlined approach enhances oversight and aligns with established escalation protocols.

Automated workflows within these platforms can further close oversight gaps. For example, Censinet AI™ speeds up risk assessments by summarizing vendor documentation, capturing integration details, and identifying risks from third-party vendors. This "human-in-the-loop" model improves efficiency while keeping critical decisions firmly under expert control.

Collaboration features on these platforms also improve vendor accountability. Joint risk assessments can address concerns related to patient data, clinical applications, and medical devices. Advanced routing ensures that key findings are reviewed and acted upon by the right stakeholders without delay.

Adapting Clinical Workflows for AI

To complement risk management efforts, clinical workflows must prioritize physician judgment. AI should act as a support tool, not a replacement for clinical decision-making. Clear protocols should define when and how AI can be used, and staff must receive training on its strengths, limitations, and appropriate applications.

Active physician involvement is essential during AI implementation, especially in the early stages. This hands-on approach helps identify potential issues and ensures seamless integration with existing workflows. Regular evaluations of AI error risks, particularly in high-stakes scenarios, are also critical [12].

Patient communication is another cornerstone of accountability. Informed consent procedures should clearly explain to patients when AI is being used in their care, emphasizing that final decisions rest with qualified healthcare professionals [13]. Transparent communication not only builds trust but also reduces liability concerns if questions arise about AI's role in treatment decisions.

Conclusion

The risks tied to AI in healthcare demand thoughtful and proactive planning. A review of over 800 tort cases suggests that courts might handle AI-related injuries similarly to those caused by traditional software, often placing the malpractice burden on providers and hospitals [1][2].

Effective governance is essential for all AI applications in healthcare. Cross-functional committees - including legal, clinical, and IT experts - can help uncover potential risks before they lead to patient harm or regulatory violations. As Professor Michelle Mello from Stanford University aptly points out:

"If liability concerns are standing in the way of adoption of technology that could help patients, we need to understand whether that concern is really proportionate to the risk" [1].

Risk assessments should be tailored to the specific application of AI. High-risk tools, like diagnostic AI, require more stringent oversight compared to lower-risk administrative systems. Using structured frameworks can strengthen accountability and improve the governance of AI implementation [2].

Beyond internal assessments, holding vendors accountable is equally crucial. Healthcare organizations should push back on vague liability disclaimers and negotiate contracts with clear indemnification clauses to ensure developers take responsibility for model errors. Contracts should also include provisions to prevent vendors from using one organization's protected health information to train models for other clients [2].

Striking a balance between innovation and accountability is key to safeguarding patient safety. Organizations that adopt strong governance practices early on, maintain transparent oversight, and use centralized risk management and third-party risk management (TPRM) tools will be better equipped to handle the challenges of AI in healthcare. For instance, platforms like Censinet RiskOps™ can streamline risk assessments and provide continuous oversight, helping ensure AI technologies are implemented responsibly and effectively. These efforts not only protect patients but also allow innovation to thrive in a controlled and safe environment.

FAQs

Who is legally responsible when an AI tool harms a patient?

Liability for harm caused by an AI tool varies based on the specific situation. The responsibility could fall on healthcare providers using the AI, the developers who designed it, or the organizations managing its deployment. Determining legal accountability for medical errors involving AI is a complicated issue that is still developing. This underscores the importance of establishing clear policies and proper oversight within healthcare environments.

How can clinicians avoid liability when AI recommendations conflict with their judgment?

Clinicians can lower liability risks by staying directly engaged in decision-making, even when incorporating AI tools. To do this, it's essential to adopt a human-in-the-loop approach, ensuring oversight and control over AI-assisted processes. Additionally, tasks like obtaining informed consent should always be managed personally. Courts typically assess actions based on what a "reasonable physician under similar circumstances" would have done, whether or not AI was involved.

What governance steps should hospitals take to monitor AI after deployment?

Hospitals need solid governance frameworks to manage AI safely, ethically, and in compliance with regulations. Here’s how they can achieve that:

- Create oversight committees: Form cross-functional teams that bring together experts from various departments to oversee AI implementation and usage.

- Track and monitor AI systems: Keep an up-to-date inventory of all AI tools in use and conduct continuous monitoring to identify potential risks.

- Conduct regular audits: Schedule routine evaluations to ensure AI systems meet safety, ethical, and compliance standards.

- Plan for incidents: Develop response plans to handle issues like system failures, data breaches, or unexpected outcomes.

- Enforce human oversight: Ensure that human decision-makers remain involved, especially in critical situations, to mitigate risks like bias or cybersecurity vulnerabilities.
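The inventory and audit steps in the list above can be sketched as a small Python example. Everything specific here is hypothetical: the `AITool` record, the tool names, the two risk tiers, and the 90-day and 365-day audit intervals are placeholders for whatever a hospital's own policy defines.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class AITool:
    name: str
    risk_tier: str     # hypothetical tiers: "high" (e.g. diagnostic) or "low" (e.g. administrative)
    last_audit: date


# Assumed audit intervals per tier; not drawn from any regulation.
AUDIT_INTERVAL_DAYS = {"high": 90, "low": 365}


def audits_due(inventory: List[AITool], today: date) -> List[str]:
    """Return the names of tools whose last audit is older than their tier's interval."""
    due = []
    for tool in inventory:
        interval = timedelta(days=AUDIT_INTERVAL_DAYS[tool.risk_tier])
        if today - tool.last_audit > interval:
            due.append(tool.name)
    return due


inventory = [
    AITool("sepsis-alert-model", "high", date(2026, 1, 5)),
    AITool("scheduling-assistant", "low", date(2025, 11, 1)),
]

overdue = audits_due(inventory, today=date(2026, 6, 1))
print(overdue)
```

Shorter intervals for higher-risk tools reflect the idea that diagnostic systems warrant closer oversight than administrative ones.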

By combining structured policies with reliable tools, hospitals can maintain effective governance and address the challenges that come with AI in healthcare.