NIST De-Identification for AI in Healthcare

Post Summary

Healthcare is at a crossroads: how do we use AI to improve patient care while protecting privacy? Much of the answer lies in NIST's de-identification guidelines, which provide a structured way to de-identify patient data for AI training while keeping the risk of privacy breaches low. Applied alongside HIPAA's de-identification methods, they help healthcare organizations meet regulatory requirements and reduce legal risk as part of a unified risk management program.

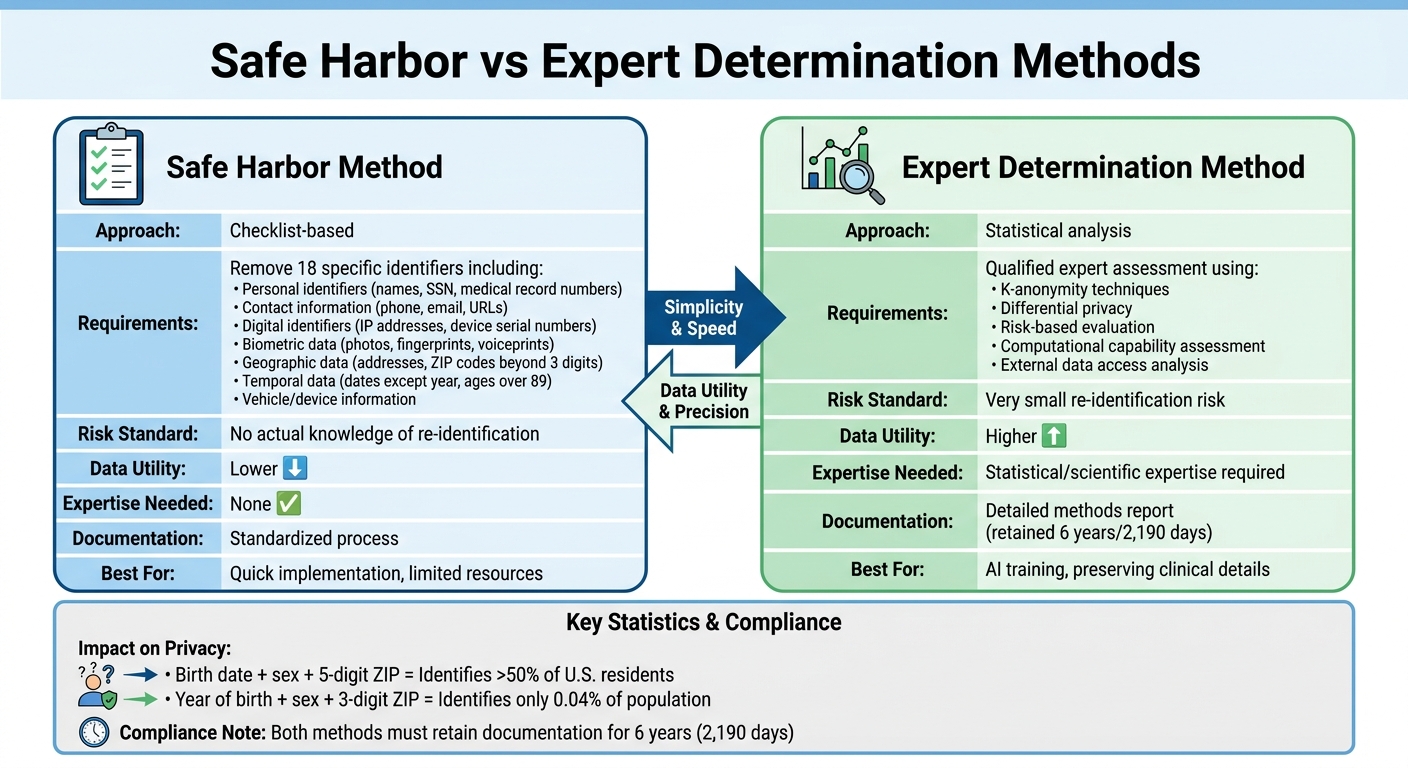

Here’s the key takeaway: HIPAA defines two de-identification methods, Safe Harbor and Expert Determination, and NIST guidance helps organizations apply them. Safe Harbor is simpler but limits data utility because it requires removing 18 identifiers. Expert Determination is more complex, but it can retain more data for AI training because a qualified expert assesses re-identification risk, often with techniques such as k-anonymity and differential privacy. Both methods aim to balance data usability with privacy protection.

Why does this matter? AI in healthcare could save the U.S. up to $360 billion annually, but privacy concerns keep data siloed, largely because organizations fear that shared data could be breached and patients harmed. NIST’s guidelines help healthcare providers share de-identified data more safely, enabling breakthroughs in AI-driven patient care.

Quick Facts:

- Safe Harbor: Checklist-based, easy to implement, but reduces data detail.

- Expert Determination: Requires statistical expertise but keeps more useful data.

- NIST SP 800-188: Finalized in 2023, provides de-identification techniques and governance guidance that complement HIPAA's methods.

- Potential Savings: $200–$360 billion annually through AI in healthcare.



HIPAA De-Identification Methods and NIST Standards

HIPAA Safe Harbor vs Expert Determination De-Identification Methods Comparison

HIPAA's de-identification methods, applied with the help of NIST standards, are crucial for advancing AI in healthcare because they govern the fine line between maintaining patient privacy and ensuring data usability.

HIPAA provides two options for de-identification: the Safe Harbor method, which follows a checklist approach, and the Expert Determination method, which relies on statistical analysis. NIST standards serve as a technical guide to effectively implement these methods. The choice between them has a direct impact on AI development. Safe Harbor offers simplicity and requires no specialized knowledge, while Expert Determination retains more clinical details, making it better suited for training precise AI models. As noted in HHS guidance:

"both methods, even when properly applied, yield de-identified data that retains some risk of identification. Although the risk is very small, it is not zero" [5].

Below, we'll explore how these methods work and their implications for AI in healthcare.

Safe Harbor Method

The Safe Harbor method provides a straightforward checklist: remove 18 specific identifiers from health data, and it qualifies as de-identified under HIPAA. This approach requires no advanced statistical expertise. The identifiers to be removed include:

- Personal identifiers: Names, Social Security numbers, medical record numbers, health plan beneficiary numbers, account numbers, certificate/license numbers, and any other unique identifying number, characteristic, or code.

- Contact information: Phone numbers, fax numbers, email addresses, and URLs.

- Digital identifiers: IP addresses and device identifiers and serial numbers.

- Biometric data: Full-face photographs and comparable images, fingerprints, and voiceprints.

- Geographic data: All subdivisions smaller than a state, such as street addresses and ZIP codes. The first three digits of a ZIP code may be kept only if the area formed by all ZIP codes sharing those digits contains more than 20,000 people; otherwise they must be changed to 000.

- Temporal data: All elements of dates (except the year) directly related to an individual, such as birth, admission, discharge, and death dates, plus ages over 89, which must be aggregated into a single category of 90 or older.

- Vehicle information: Vehicle identifiers and serial numbers, including license plate numbers [5].
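To make the geographic, date, and age rules concrete, here is a minimal Python sketch of how a pipeline might generalize those fields. It is illustrative only: `RESTRICTED_ZIP3` holds hypothetical placeholder prefixes (a real list must be derived from current Census population data), and the field names and function names are assumptions.

```python
from datetime import date

# Hypothetical placeholder prefixes. A real list of 3-digit ZIP areas with
# 20,000 or fewer residents must be built from current Census data.
RESTRICTED_ZIP3 = {"036", "059", "102"}


def generalize_zip(zip_code: str) -> str:
    """Keep the first three ZIP digits, or '000' for low-population areas."""
    prefix = zip_code[:3]
    return "000" if prefix in RESTRICTED_ZIP3 else prefix


def generalize_age(age: int) -> str:
    """Aggregate all ages over 89 into a single '90+' category."""
    return "90+" if age > 89 else str(age)


def safe_harbor_fields(record: dict) -> dict:
    """Return generalized fields; direct identifiers (name, MRN, phone, etc.) are not copied."""
    over_89 = record["age"] > 89
    return {
        "zip3": generalize_zip(record["zip"]),
        # Keep only the year; suppress birth year entirely when it reveals an age over 89.
        "birth_year": None if over_89 else record["birth_date"].year,
        "admit_year": record["admit_date"].year,
        "age": generalize_age(record["age"]),
        "diagnosis": record["diagnosis"],  # clinical field retained as-is
    }


record = {"zip": "03601", "birth_date": date(1930, 5, 2),
          "admit_date": date(2024, 1, 15), "age": 93, "diagnosis": "E11.9"}
print(safe_harbor_fields(record))
# {'zip3': '000', 'birth_year': None, 'admit_year': 2024, 'age': '90+', 'diagnosis': 'E11.9'}
```

Birth year is suppressed for patients over 89 because Safe Harbor treats any date element that indicates such an age as an identifier.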

Compliance is achieved when these identifiers are removed and the organization has no actual knowledge that the remaining information could be used, alone or in combination, to identify an individual. However, this rigid approach limits data usefulness. Research highlights that date of birth, sex, and 5-digit ZIP code uniquely identify over 50% of U.S. residents [5], while reducing these to year of birth, sex, and 3-digit ZIP code lowers the share of unique residents to about 0.04% [5]. This generalization minimizes re-identification risk, but it also removes clinical details that AI models often need.
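The uniqueness figures above come from population studies, but the same idea can be measured on a single dataset. The Python sketch below computes the share of records whose combination of quasi-identifiers appears exactly once. The toy records and field names are hypothetical, and uniqueness within a sample is only a proxy for uniqueness in the wider population.

```python
from collections import Counter


def uniqueness_rate(records: list[dict], quasi_identifiers: list[str]) -> float:
    """Fraction of records whose quasi-identifier combination occurs exactly once."""
    if not records:
        return 0.0
    keys = [tuple(r[q] for q in quasi_identifiers) for r in records]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(keys)


# Hypothetical toy records.
records = [
    {"dob": "1980-03-14", "sex": "F", "zip": "94110"},
    {"dob": "1980-07-02", "sex": "F", "zip": "94112"},
    {"dob": "1975-11-30", "sex": "M", "zip": "94110"},
    {"dob": "1975-01-09", "sex": "M", "zip": "94117"},
]
# Generalize to year of birth and 3-digit ZIP, as in the example above.
generalized = [
    {"birth_year": r["dob"][:4], "sex": r["sex"], "zip3": r["zip"][:3]} for r in records
]

print(uniqueness_rate(records, ["dob", "sex", "zip"]))              # 1.0
print(uniqueness_rate(generalized, ["birth_year", "sex", "zip3"]))  # 0.0
```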

Expert Determination Method

The Expert Determination method uses a risk-based approach. Instead of following a fixed checklist, a qualified expert applies statistical and scientific principles to ensure the risk of re-identification remains "very small." According to HIPAA, this expert must have

"appropriate knowledge of and experience with generally accepted statistical and scientific principles"

and must document both the methods used and the results that justify the determination [5].

Unlike Safe Harbor, this method can preserve more data utility through techniques such as k-anonymity and differential privacy, which NIST guidelines support. This flexibility allows datasets to include partial dates or detailed geographic data, which are often critical for AI training. The expert evaluates factors such as available computational capabilities, access to external data, and the length of time for which the data must remain protected [5].
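As a rough illustration of one such technique, k-anonymity requires every combination of quasi-identifier values to be shared by at least k records. The Python sketch below measures k for a dataset and lists the groups that fall short. The field names and toy data are hypothetical, and a real Expert Determination would weigh k alongside the other factors listed above.

```python
from collections import Counter


def k_anonymity(records: list[dict], quasi_identifiers: list[str]) -> int:
    """Return k: the size of the smallest group sharing identical quasi-identifier values."""
    if not records:
        raise ValueError("cannot measure k-anonymity of an empty dataset")
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values())


def violating_groups(records: list[dict], quasi_identifiers: list[str], k: int) -> list[tuple]:
    """List quasi-identifier combinations shared by fewer than k records."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return [combo for combo, size in groups.items() if size < k]


# Hypothetical records; "dx" is treated as a sensitive attribute, not a quasi-identifier.
records = [
    {"age_band": "40-49", "zip3": "941", "dx": "E11.9"},
    {"age_band": "40-49", "zip3": "941", "dx": "I10"},
    {"age_band": "40-49", "zip3": "941", "dx": "J45"},
    {"age_band": "50-59", "zip3": "941", "dx": "I10"},
    {"age_band": "50-59", "zip3": "941", "dx": "E11.9"},
]
qi = ["age_band", "zip3"]
print(k_anonymity(records, qi))          # 2
print(violating_groups(records, qi, 3))  # [('50-59', '941')]
```

Note that k-anonymity alone does not stop attribute disclosure: if every record in a group shared the same diagnosis, anyone who placed a patient in that group would learn it.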

The downside is complexity. Safe Harbor can be implemented without specialized knowledge, but Expert Determination requires statistical expertise and thorough documentation, and organizations often need robust third-party risk management to ensure that every partner handling the de-identified data does so securely. For AI projects where temporal or geographic data plays a key role, this method often delivers better results. HIPAA requires that de-identification documentation be retained for six years from the date of its creation or the date it was last in effect, whichever is later [4].

| Feature | Safe Harbor Method | Expert Determination Method |
|---|---|---|
| Approach | Checklist-based | Statistical analysis |
| Requirements | Remove 18 identifiers | Qualified expert assessment |
| Risk Standard | No actual knowledge of re-identification risk | Very small re-identification risk |
| Data Utility | Lower | Higher |
| Expertise Needed | None | Statistical/scientific |
| Documentation | Standardized process | Detailed methods report |

NIST Guidelines on De-Identification Processes

NIST views de-identification as a flexible framework rather than a rigid checklist, aiming to reduce re-identification risks while maintaining the usefulness of data for specific purposes [1][6]. This approach allows healthcare organizations to tailor the guidelines to their unique AI development needs. As NIST researcher Simson L. Garfinkel puts it:

"De-identification thus attempts to balance the contradictory goals of using and sharing personal information while protecting privacy" [1].

This balancing act is especially important in AI projects, where the quality of training data directly affects model performance. NIST acknowledges that achieving zero risk is nearly impossible; instead, it focuses on reducing risk to an acceptable level. Its guidelines emphasize an iterative process: assess the risk of identification, apply techniques such as generalization or suppression, and re-evaluate until the data meets the desired risk threshold [5]. This iterative workflow is particularly suited to the evolving needs of healthcare AI.
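The assess, transform, and re-evaluate cycle can be sketched as a simple loop. In the Python example below, a hypothetical generalization ladder coarsens age and ZIP code one step at a time until every group of matching records reaches a target size k. This is a deliberately simplified form of global recoding; production tools search over many combinations and also measure how much utility each step costs.

```python
from collections import Counter

# Hypothetical generalization ladder, from most detailed to most general.
LADDER = [
    lambda r: (r["age"], r["zip"]),                 # exact age, 5-digit ZIP
    lambda r: (r["age"] // 5 * 5, r["zip"][:3]),    # 5-year band, 3-digit ZIP
    lambda r: (r["age"] // 10 * 10, r["zip"][:3]),  # 10-year band, 3-digit ZIP
    lambda r: (r["age"] // 10 * 10, "*"),           # 10-year band, ZIP suppressed
]


def smallest_group(records, key_fn):
    """Assess: size of the smallest group of records sharing the same key."""
    return min(Counter(key_fn(r) for r in records).values())


def generalize_until(records, target_k):
    """Generalize one step at a time and re-assess until k >= target_k.

    Returns (level, generalized_keys), or (None, []) if even the coarsest
    level fails, in which case suppression or expert review is needed.
    """
    for level, key_fn in enumerate(LADDER):
        if smallest_group(records, key_fn) >= target_k:
            return level, [key_fn(r) for r in records]
    return None, []


records = [
    {"age": 31, "zip": "94110"},
    {"age": 33, "zip": "94112"},
    {"age": 34, "zip": "94117"},
    {"age": 38, "zip": "94110"},
]
level, keys = generalize_until(records, target_k=2)
print(level, keys)  # 2 [(30, '941'), (30, '941'), (30, '941'), (30, '941')]
```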

Core Principles of NIST De-Identification

Building on HIPAA's framework, HHS guidance on the Expert Determination method describes three principles that are especially relevant for AI applications and help guide risk assessments: replicability, data source availability, and distinguishability [5].

- Replicability refers to how consistently a feature is tied to an individual.

- Data source availability considers whether external datasets could contain identifiers that match the data.

- Distinguishability measures how much a subject's data stands out within the dataset.

For example, stable demographics like birth dates pose a higher risk of re-identification compared to variable clinical data, such as blood glucose levels. Healthcare organizations should focus on protecting features that are stable, publicly available, and highly distinctive [5]. These high-risk features require extra safeguards to ensure privacy.

Additionally, organizations must document the methods and results used to demonstrate that re-identification risk is "very small" [5]. This documentation must be retained for six years and serves as proof of compliance during audits. Given the rapid pace of technological change, experts should also consider time-limited certifications that account for future risks from evolving computational capabilities [5].

Balancing Data Utility and Privacy

One of the biggest challenges in AI development is the trade-off between de-identification and data quality. Removing identifiable information usually costs some detail, which can limit the dataset's usefulness for training AI models [5]. The Expert Determination method helps address this by allowing customized solutions. Unlike Safe Harbor, which mandates the removal of 18 specific identifiers, Expert Determination lets organizations create multiple versions of a de-identified dataset, each tailored to a different purpose. For instance:

- A dataset for geographic AI analysis might retain detailed geocodes but use generalized age data.

- Another dataset for demographic modeling might include fine-grained age details but generalized geocodes [5].
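One way to organize such tailored releases is to define a generalization profile per use case and derive each release from the same source data. The Python sketch below is hypothetical throughout: the profile names, the `geocode` field (assumed here to be an 11-digit census tract code whose first two digits identify the state), and the chosen transformations are illustrations, and each release would still need its own risk assessment.

```python
# Hypothetical release profiles: each maps a quasi-identifier to a transformation.
PROFILES = {
    # Geographic analysis: keep the full geocode, coarsen age to a decade.
    "geographic": {
        "age": lambda a: f"{a // 10 * 10}s",
        "geocode": lambda g: g,
    },
    # Demographic modeling: keep exact age, coarsen geocode to the state prefix.
    "demographic": {
        "age": lambda a: a,
        "geocode": lambda g: g[:2],
    },
}


def make_release(records, profile_name, passthrough=("outcome",)):
    """Build one tailored release from the same source records."""
    profile = PROFILES[profile_name]
    release = []
    for r in records:
        row = {field: transform(r[field]) for field, transform in profile.items()}
        row.update({field: r[field] for field in passthrough})
        release.append(row)
    return release


source = [{"age": 47, "geocode": "06075012300", "outcome": "readmitted"}]
print(make_release(source, "geographic"))
# [{'age': '40s', 'geocode': '06075012300', 'outcome': 'readmitted'}]
print(make_release(source, "demographic"))
# [{'age': 47, 'geocode': '06', 'outcome': 'readmitted'}]
```

A recipient holding both releases could recombine exact age with the full geocode, so this design depends on keeping releases apart, which is one reason the data use agreements discussed below matter.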

This adaptability is crucial because, as NIST points out, "Data is the fuel, and AI is the engine" [2]. Poor data quality can significantly weaken AI performance [2]. The goal is to apply de-identification strategies that preserve critical information for specific AI tasks while keeping re-identification risks acceptably low.

Healthcare organizations can further protect privacy by implementing data use agreements when sharing de-identified datasets for AI training [5]. These agreements help prevent the merging of multiple de-identified datasets, which could compromise privacy. Another option is assigning unique codes to de-identified records for internal re-identification purposes. These codes must not be derived from the individual's information, and the re-identification process must remain confidential [5]. These measures help organizations prepare for advanced de-identification techniques tailored to AI workflows.
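The requirement that codes not be derived from patient information rules out shortcuts such as hashing a medical record number, because anyone who can guess MRNs could recompute the hashes. A minimal Python sketch, using the standard library's `secrets` module, assigns random codes and keeps the code-to-MRN key inside the organization. The class and method names are hypothetical, and in practice the key would live in a separately secured store rather than in memory.

```python
import secrets


class ReidentificationKey:
    """Hypothetical in-memory key mapping random codes to medical record numbers (MRNs)."""

    def __init__(self) -> None:
        self._code_to_mrn: dict[str, str] = {}
        self._mrn_to_code: dict[str, str] = {}

    def code_for(self, mrn: str) -> str:
        """Return the record's code, generating a new random one on first use."""
        if mrn not in self._mrn_to_code:
            code = secrets.token_hex(8)  # 16 hex characters, not derived from the MRN
            while code in self._code_to_mrn:  # guard against the rare collision
                code = secrets.token_hex(8)
            self._mrn_to_code[mrn] = code
            self._code_to_mrn[code] = mrn
        return self._mrn_to_code[mrn]

    def reidentify(self, code: str) -> str:
        """Internal-only lookup; the mapping itself must never be disclosed."""
        return self._code_to_mrn[code]


key = ReidentificationKey()
code = key.code_for("MRN-000123")
print(code)                  # a random 16-character hex string
print(key.reidentify(code))  # MRN-000123
```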

How to Implement NIST-Compliant De-Identification in Healthcare AI

Implementing NIST-compliant de-identification requires a structured and iterative approach that balances privacy with data utility for AI training. The process involves identifying sensitive data, applying tailored techniques, and rigorously validating the results. Healthcare organizations should treat this as an ongoing workflow, continuously reassessing risks as datasets expand or new external data sources become available. These initial steps are critical for applying effective de-identification strategies suited to AI.

Preparing Datasets for De-Identification

Before diving into de-identification, it's essential to identify and classify all sensitive data elements. This includes structured databases, free-text clinical notes, and medical images [1]. Key risk factors to consider include:

- Replicability: How consistently a data element occurs in relation to the same individual (a date of birth never changes, while a lab value fluctuates).

- Data source availability: The likelihood of external data being used to re-identify individuals.

- Distinguishability: The uniqueness of specific data points.

High-risk features, like stable demographic attributes (e.g., date of birth or ZIP code), require extra attention compared to variable clinical data. Prioritizing these high-risk elements during the planning phase ensures stronger protection.

De-Identification Techniques

Once high-risk elements are identified, specific de-identification methods can be applied. NIST endorses techniques that balance reducing re-identification risks with retaining the data's utility. For example, the Expert Determination method allows organizations to keep partial dates or generalized geographic information, provided a qualified expert confirms that the re-identification risk is "very small" [5][4]. This is particularly useful for AI, as it preserves the contextual richness needed for effective model training.

Modern AI workflows often use hybrid tokenization: sensitive data is replaced with tokens before it reaches AI systems, while the original protected health information (PHI) is stored securely in an encrypted mapping [4]. For unstructured clinical text, Named Entity Recognition (NER) models generally detect PHI more reliably than regex-based rules. Microsoft Presidio, Amazon Comprehend Medical, and John Snow Labs' Spark NLP for Healthcare are commonly used tools for detecting PHI in text [4].
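The tokenization step itself is simple once PHI has been located. The Python sketch below assumes the spans have already been found by an NER tool (the detector is not shown, and the offsets here are supplied by hand). It swaps each span for an opaque token and records the original text in a `vault` dictionary, which stands in for the separately stored, encrypted mapping. The function names are hypothetical, and spans are assumed not to overlap.

```python
import secrets


def tokenize_phi(text: str, spans: list[tuple[int, int, str]], vault: dict[str, str]) -> str:
    """Replace each (start, end, label) span with an opaque token."""
    out = text
    # Work right to left so earlier offsets stay valid after each replacement.
    for start, end, label in sorted(spans, key=lambda s: s[0], reverse=True):
        token = f"[{label}_{secrets.token_hex(4)}]"
        vault[token] = text[start:end]
        out = out[:start] + token + out[end:]
    return out


def detokenize(text: str, vault: dict[str, str]) -> str:
    """Restore original values; only systems authorized to read the vault should call this."""
    for token, original in vault.items():
        text = text.replace(token, original)
    return text


note = "Jane Doe called from 555-0142 on 03/14/2024."
spans = [  # hand-supplied here; in practice produced by an NER detector
    (0, 8, "NAME"),
    (note.index("555-0142"), note.index("555-0142") + 8, "PHONE"),
    (note.index("03/14/2024"), note.index("03/14/2024") + 10, "DATE"),
]
vault: dict[str, str] = {}
safe_text = tokenize_phi(note, spans, vault)
print(safe_text)  # token suffixes are random on each run
```

In the hybrid pattern described above, only `safe_text` would be sent to the AI system.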

When working with cloud-based AI providers such as OpenAI or Anthropic, organizations should use zero-data-retention options where the provider offers them, so that no data is stored after processing [4]. PHI token mappings should also be stored separately from the tokenized datasets and protected with envelope encryption, in which the AES-256 keys that encrypt the data are themselves encrypted by a key held in a Key Management Service (KMS) [4].

Testing De-Identification Effectiveness

Validating de-identification measures is a critical step. This involves three main tasks: evaluating potential re-identification risks, applying necessary mitigation techniques, and reassessing risks [5]. Organizations must also consider whether external data sources, like voter registries or public databases, could be used to re-identify de-identified records [5]. Regular testing helps refine the process and ensures compliance with NIST standards.
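A basic linkage test can be automated. The Python sketch below simulates an attacker who holds an external list, such as a voter registry, with names alongside the same quasi-identifiers, and flags de-identified records that match exactly one external entry. The data and field names are hypothetical, and real attacks may use fuzzy matching and several sources at once, so this is a simplified check rather than a full assessment.

```python
from collections import Counter


def unique_linkages(deid_records, external_records, keys):
    """Return de-identified records that match exactly one external record on `keys`."""
    external_counts = Counter(tuple(r[k] for k in keys) for r in external_records)
    return [r for r in deid_records if external_counts[tuple(r[k] for k in keys)] == 1]


keys = ["birth_year", "sex", "zip3"]
deid = [
    {"birth_year": 1980, "sex": "F", "zip3": "941", "dx": "E11.9"},
    {"birth_year": 1975, "sex": "M", "zip3": "941", "dx": "I10"},
]
voter_list = [  # hypothetical external source
    {"name": "A. Rivera", "birth_year": 1980, "sex": "F", "zip3": "941"},
    {"name": "B. Chen", "birth_year": 1980, "sex": "F", "zip3": "941"},
    {"name": "C. Okafor", "birth_year": 1975, "sex": "M", "zip3": "941"},
]
at_risk = unique_linkages(deid, voter_list, keys)
print(len(at_risk))  # 1: the 1975/M record links to a single named person
```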

Experts often validate de-identification measures for a specific timeframe, accounting for advancements in computational capabilities and access to external data sources [5]. If re-identification codes are used to allow future linking, these mechanisms must be securely stored and kept separate from the de-identified datasets. They should also never be disclosed [5]. This ensures that the process remains secure and aligned with privacy guidelines as both technology and data landscapes evolve.

Challenges and Best Practices for NIST De-Identification in AI

De-identification in healthcare AI often grapples with a tough balancing act: protecting privacy while preserving data utility. For instance, cases involving unique demographic details may require adding more noise to the data, which can negatively affect the accuracy of AI models. NIST scientist Gary Howarth highlights this challenge:

"There is no simple answer for how to balance privacy with usefulness. You must answer that every time you apply DP [Differential Privacy] to data" [7].

Adding to the complexity, organizations often struggle to verify vendor claims about de-identification software because standardized evaluation methods have been lacking. NIST SP 800-226, finalized in March 2025, addresses part of this gap by providing guidelines for evaluating differential privacy guarantees, supported by interactive tools and sample code [7]. These developments provide a foundation for addressing risks and refining strategies.
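To make the privacy-usefulness trade-off concrete, the Laplace mechanism is one of the simplest differential privacy techniques: it adds noise scaled to 1/ε to a counting query, so a smaller privacy budget ε means stronger privacy and noisier answers. The Python sketch below is a teaching example under that assumption (a count has sensitivity 1). Floating-point implementations like this one have known subtle weaknesses, so production systems should rely on a vetted differential privacy library.

```python
import random


def laplace_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    Adding or removing one patient changes a count by at most 1 (sensitivity 1),
    so the noise is drawn from Laplace(0, 1 / epsilon).
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = 1.0 / epsilon
    # The difference of two independent exponentials with mean `scale`
    # follows a Laplace distribution with location 0 and that scale.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


rng = random.Random(7)
for eps in (0.1, 1.0, 10.0):
    print(eps, round(laplace_count(412, eps, rng), 1))
```

Smaller ε values spread the released counts further from the true value of 412; the exact numbers depend on the random seed.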

Managing Re-Identification Risks

Re-identification risks are a moving target, shaped by the emergence of new external datasets and advances in computational power. AI systems are also susceptible to adversarial attacks, including attempts to extract or infer information about the individuals in their training data [8]. These risks increase when de-identified datasets are combined with external sources such as voter registries or public databases [1].

To address these risks, healthcare organizations should consider forming a Disclosure Review Board (DRB) to oversee de-identification efforts and conduct regular re-identification studies [3]. Using measurable privacy standards, such as k-anonymity or differential privacy, offers stronger protection compared to basic data masking approaches [1][3]. Additionally, the NIST AI Risk Management Framework provides a structured approach with its four core functions - Govern, Map, Measure, and Manage. This framework, shaped by input from over 240 organizations, helps organizations pinpoint where AI is deployed and assess risks based on their severity and likelihood [8].

Tools like Censinet RiskOps™ can assist by automating and monitoring de-identification processes, ensuring compliance and maintaining data integrity.

Preserving Data Quality for AI Training

While managing re-identification risks is crucial, ensuring high-quality data is just as important for effective AI training. AI models rely on data that is accurate, complete, consistent, relevant, and timely [2]. One way to enhance data-sharing practices is by moving away from publishing raw de-identified files. Instead, organizations can offer secure query interfaces or create non-public protected enclaves, which allow researchers controlled access to detailed data [3]. Another effective approach is generating synthetic data modeled on identified datasets, enabling AI training without exposing real records [3].

To maintain data quality, automated tools like NIST's Quality of Data-at-Rest (qDAR) can help by identifying inconsistencies and ensuring records meet defined thresholds before being used for AI training [2]. Regular technical audits are also critical to sustaining AI performance and ethical standards. Furthermore, organizations must carefully manage de-identification processes to avoid introducing or amplifying biases, which could lead to unfair or discriminatory AI outcomes [8].
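Simple automated checks can enforce quality thresholds before data enters a training pipeline. The Python sketch below is not qDAR; it is a hypothetical stand-in that measures field completeness and flags one kind of inconsistency (a discharge date before the admission date). The 95% completeness threshold, the field names, and the function names are assumptions chosen for illustration.

```python
from datetime import date


def completeness(records: list[dict], fields: list[str]) -> dict[str, float]:
    """Share of records with a non-empty value for each field."""
    if not records:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in records if r.get(f) not in (None, "")) / len(records)
        for f in fields
    }


def inconsistent_stays(records: list[dict]) -> list[dict]:
    """Records whose discharge date precedes their admission date."""
    return [
        r for r in records
        if r.get("admit") and r.get("discharge") and r["discharge"] < r["admit"]
    ]


def quality_gate(records, fields, min_completeness=0.95):
    """Return (passed, problems) for a hypothetical pre-training quality gate."""
    problems = {f: s for f, s in completeness(records, fields).items() if s < min_completeness}
    bad_stays = inconsistent_stays(records)
    if bad_stays:
        problems["inconsistent_stays"] = len(bad_stays)
    return not problems, problems


records = [
    {"age": 40, "dx": "E11.9", "admit": date(2024, 1, 3), "discharge": date(2024, 1, 6)},
    {"age": None, "dx": "I10", "admit": date(2024, 2, 1), "discharge": date(2024, 1, 28)},
    {"age": 55, "dx": "J45", "admit": date(2024, 3, 9), "discharge": date(2024, 3, 10)},
    {"age": 61, "dx": "I10", "admit": date(2024, 4, 2), "discharge": date(2024, 4, 4)},
]
print(quality_gate(records, ["age", "dx"]))
# (False, {'age': 0.75, 'inconsistent_stays': 1})
```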

Using Censinet RiskOps™ for De-Identification and Risk Management

Healthcare organizations face the dual challenge of implementing NIST-compliant de-identification processes while navigating the complexities of AI governance. Censinet RiskOps™ tackles this by centralizing risk management workflows, ensuring organizations maintain control over de-identified data throughout the AI lifecycle. This approach not only simplifies de-identification but also lays the groundwork for effective AI governance.

Automating De-Identification Workflows

Censinet RiskOps™ reduces manual processing by automating de-identification tasks through APIs that integrate with major cloud platforms and EHR systems. For instance, a mid-sized healthcare provider used the platform to automate the de-identification of 50,000 scans. By applying k-anonymity (k=10), one of the techniques described in NIST SP 800-188, it cut deployment time from six weeks to just two days and reported a 99.5% reduction in re-identification risk.

In another case, organizations managing AI training data for predictive analytics reported a 70% decrease in manual review time while staying HIPAA-compliant. The platform applies real-time transformations such as tokenization, generalization, and suppression to anonymize all 18 HIPAA identifiers. For example, when processing 1 million patient records for AI fraud detection models, the system maintained the data’s utility for machine learning while logging detailed audit trails to ensure compliance.

Supporting AI Governance and Compliance

Beyond automation, Censinet RiskOps™ enhances AI governance and compliance efforts. Its assessment module, aligned with the NIST AI RMF 1.0, helps organizations identify and address risks tied to AI adoption, including data privacy and security vulnerabilities. By using standardized questionnaires tailored to the NIST AI RMF, the platform evaluates AI-related controls and procedures effectively.

When risks are uncovered, the system generates automated action plans with clear recommendations to address compliance gaps and align with NIST standards. These findings feed into the centralized Censinet Risk Register, fostering collaboration among governance, risk, and compliance teams. Healthcare organizations leveraging these pre-built templates have seen regulatory approvals for AI projects accelerate by 40%, thanks to streamlined documentation of de-identification processes and risk management strategies.

Conclusion

NIST-compliant de-identification plays a key role in balancing patient privacy with the advancement of AI in healthcare. By adhering to NIST guidelines, organizations can rigorously transform sensitive patient data while preserving its clinical value for training accurate AI models. This approach ensures that data remains both secure and useful.

Regulatory bodies are increasingly aligning with NIST standards, further solidifying their importance. Agencies like the FDA, FTC, and OCR reference these standards in their enforcement guidelines [9]. For healthcare organizations, adopting NIST-aligned de-identification processes not only meets HIPAA requirements but also supports cutting-edge AI projects, such as predictive analytics and treatment optimization.

The case examples above suggest that NIST-guided de-identification can support large-scale AI development without sacrificing privacy.

Additionally, the four key functions of the NIST AI Risk Management Framework - Govern, Map, Measure, and Manage - offer healthcare organizations a clear roadmap for addressing enterprise risks and implementing effective controls [9]. By inventorying AI systems, using the NIST AI RMF Playbook, and continuously monitoring their processes, organizations can enhance compliance and minimize risks.

Ultimately, healthcare organizations must view de-identification as an ongoing governance responsibility, not a one-time task. Integrating NIST standards throughout the AI development lifecycle allows organizations to advance AI capabilities while maintaining patient trust and privacy.

FAQs

How do I choose Safe Harbor vs Expert Determination for an AI project?

Choosing between Safe Harbor and Expert Determination depends on how you plan to use the data and the level of compliance complexity you're prepared to handle.

Safe Harbor is the quicker and simpler option. It works by removing 18 specific identifiers from the dataset, which ensures compliance but reduces the level of detail in the data. This makes it a good choice when you need a straightforward solution without much concern for maintaining data granularity.

On the other hand, Expert Determination is a more tailored approach. It involves a qualified expert applying advanced techniques to maximize data utility while ensuring compliance. While this method provides more detailed and versatile datasets - ideal for tasks like AI model training or in-depth analytics - it requires more effort, oversight, and governance.

In short, go with Safe Harbor for fast and easy compliance. Opt for Expert Determination when your project demands richer, more detailed data.

What does “very small” re-identification risk mean in practice?

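A "very small" re-identification risk means a very low likelihood that an anticipated recipient could link de-identified healthcare data back to specific individuals, using the data alone or in combination with other reasonably available information. HIPAA does not set a universal numerical threshold for "very small"; the expert chooses an acceptable level for the specific context, such as who will receive the data and what other data they can access, and documents that justification. Methods such as generalization, suppression, and statistical protections significantly reduce the risk, but they cannot eliminate it entirely.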
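Because there is no fixed number, experts often express risk with measurable proxies. One common framing assigns each record a re-identification probability of 1 divided by the size of its group of records sharing the same quasi-identifiers. The Python sketch below computes the worst-case (maximum) and average of that probability. The data, field names, and the 0.05 threshold are hypothetical, and choosing a real threshold is part of the expert's documented judgment.

```python
from collections import Counter


def reidentification_risk(records: list[dict], quasi_identifiers: list[str]) -> dict[str, float]:
    """Estimate re-identification risk from group sizes.

    Each record's risk is 1 / (number of records sharing its quasi-identifiers).
    max_risk is the worst single record; avg_risk is the mean across records,
    which simplifies to (number of groups) / (number of records).
    """
    if not records:
        raise ValueError("no records to assess")
    sizes = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return {
        "max_risk": 1 / min(sizes.values()),
        "avg_risk": len(sizes) / len(records),
    }


records = [{"band": "40-49", "zip3": "941"}] * 3 + [{"band": "50-59", "zip3": "941"}] * 2
risk = reidentification_risk(records, ["band", "zip3"])
print(risk)  # {'max_risk': 0.5, 'avg_risk': 0.4}

THRESHOLD = 0.05  # hypothetical; HIPAA sets no numeric value
print(risk["max_risk"] <= THRESHOLD)  # False: more generalization would be needed
```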

How can we keep data useful for AI while meeting NIST and HIPAA?

Healthcare organizations aiming to balance data utility for AI with NIST and HIPAA compliance should focus on de-identification and pseudonymization. Removing or masking HIPAA's 18 identifiers protects patient privacy. Pseudonymization adds another layer by replacing identifiers with codes, which allows AI training while preserving the ability to re-identify records when necessary, provided the codes are not derived from patient information and the key linking them to patients is kept confidential.

To further secure data, organizations should implement strong encryption, enforce strict access controls, and conduct privacy impact assessments. These measures help maintain compliance and ensure that AI development can proceed securely and responsibly.