FDA, FTC, and Beyond: Multi-Agency Compliance for Healthcare AI

Post Summary

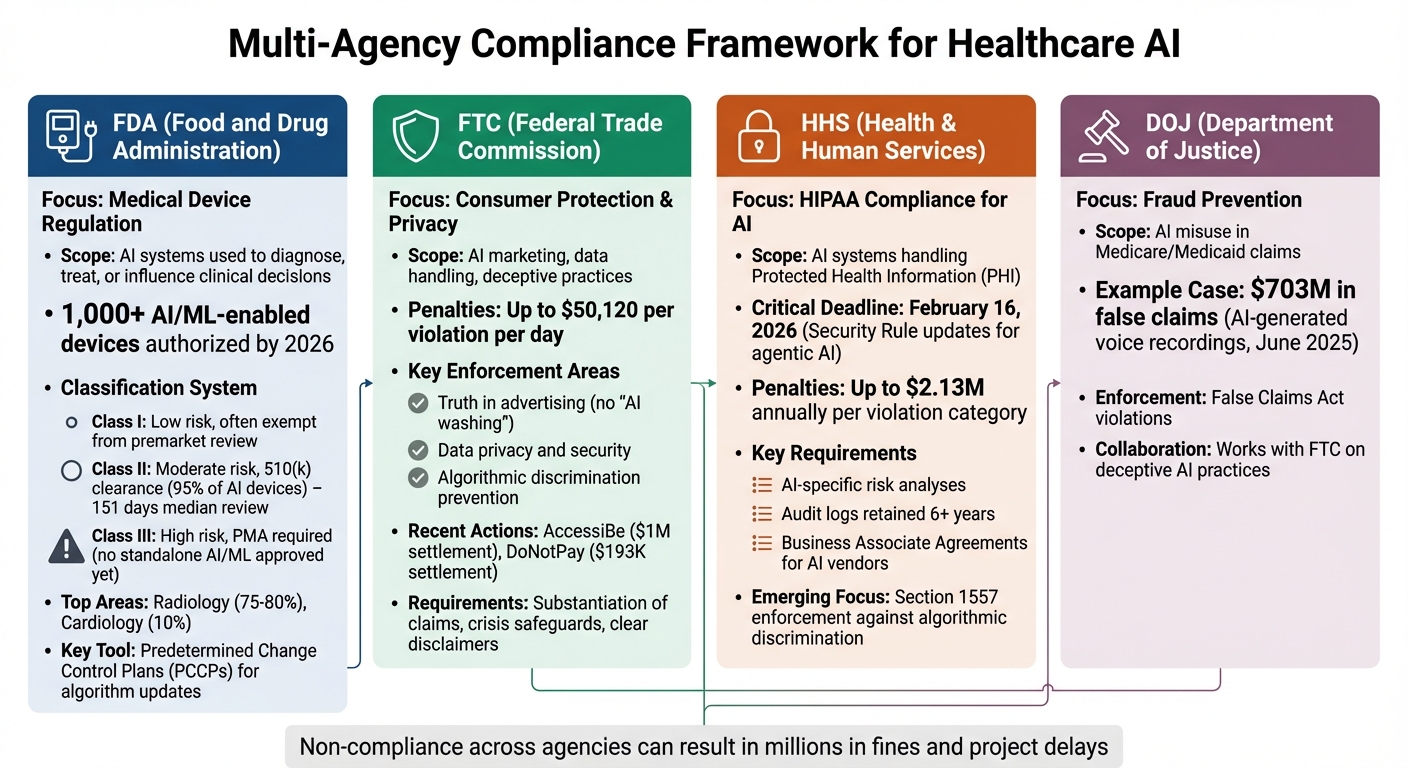

Artificial intelligence (AI) in healthcare is growing fast, but staying compliant across multiple agencies is a challenge. The FDA, FTC, HHS, and DOJ each enforce different rules for AI tools, from medical device authorization to privacy and fraud prevention. By early 2026, the FDA had authorized more than 1,000 AI-enabled medical devices, but organizations also face risks such as deceptive advertising, algorithmic bias, and misuse of patient data.

Key Takeaways:

- FDA: Oversees AI as medical devices with risk-based classifications and postmarket monitoring.

- FTC: Regulates truthful advertising, data privacy, and prevention of deceptive practices.

- HHS: Enforces HIPAA for AI systems handling patient data, with updated rules for AI by February 2026.

- DOJ: Investigates fraud, such as AI misuse in Medicare claims, with fines reaching millions.

To simplify compliance, tools like Censinet RiskOps™ centralize risk management, cutting time spent on manual reviews by up to 70% and reducing errors when tracking requirements from several agencies. With AI's rapid adoption, staying ahead of evolving regulations is critical for healthcare providers and developers.



FDA Regulation of AI-Enabled Medical Devices

The FDA classifies AI systems as medical devices when they are used to diagnose, treat, or influence clinical decisions. By early 2026, the FDA had authorized more than 1,000 AI/ML-enabled devices, with a record 258 to 295 cleared in 2025 alone [4][6]. The regulatory process depends on the level of risk the device poses to patients.

AI/ML Action Plan and Risk-Based Classification

The FDA uses a three-tier system to classify medical devices based on risk:

- Class I devices: These pose low risk and are often exempt from premarket review. Examples include basic wellness apps or specimen-handling tools.

- Class II devices: These carry moderate risk and require either 510(k) clearance (showing substantial equivalence to a legally marketed predicate device) or De Novo classification (for novel low-to-moderate-risk devices with no predicate).

- Class III devices: These represent the highest risk level and require Premarket Approval (PMA), a rigorous review process reserved for devices that sustain or support life or otherwise pose significant risk [4][6].

Most FDA-authorized AI/ML SaMD (Software as a Medical Device) fall under Class II. In 2024, all 168 AI/ML-enabled devices cleared by the FDA were designated as Class II [4][7]. To date, no standalone AI/ML SaMD has been approved through the Class III PMA pathway [4][6]. About 95% of AI/ML devices gain authorization via the 510(k) pathway, while around 5% use the De Novo pathway. Median review times are 151 days for 510(k) submissions and 372 days for De Novo requests [4].
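
As a rough illustration of how the three-tier system maps to a premarket pathway, here is a minimal Python sketch. The names `DeviceClass` and `likely_pathway` are made up for this example, and the logic is a simplification of the tiers described above, not a regulatory determination.

```python
from enum import Enum


class DeviceClass(Enum):
    CLASS_I = 1
    CLASS_II = 2
    CLASS_III = 3


def likely_pathway(device_class: DeviceClass, has_predicate: bool) -> str:
    """Return the premarket pathway the three-tier scheme points to.

    Simplified assumption: Class II splits on whether a comparable
    device already exists on the market.
    """
    if device_class is DeviceClass.CLASS_I:
        return "often exempt from premarket review"
    if device_class is DeviceClass.CLASS_II:
        # 510(k) relies on an existing comparable device; De Novo covers novel ones.
        return "510(k)" if has_predicate else "De Novo"
    return "PMA"


print(likely_pathway(DeviceClass.CLASS_II, has_predicate=True))   # 510(k)
print(likely_pathway(DeviceClass.CLASS_II, has_predicate=False))  # De Novo
```

In practice the classification itself depends on intended use and FDA product codes, so a lookup like this is only a starting point for a conversation with regulatory counsel.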

Radiology leads the AI medical device sector, accounting for about 75–80% of all FDA-authorized AI/ML devices, with cardiology representing roughly 10% [6]. A milestone in this field occurred in 2018 when IDx-DR (Digital Diagnostics) became the first FDA-authorized autonomous AI diagnostic system. It received De Novo authorization as a Class II device to detect diabetic retinopathy without needing a clinician's input [4][6]. In 2024, Viz.ai received 510(k) clearance for Viz ICH Plus, an AI tool that identifies and measures intracerebral hemorrhage on CT scans [4]. That same year, Prenosis earned De Novo marketing authorization for Sepsis ImmunoScore, an AI diagnostic tool that predicts sepsis risk by integrating lab data, vital signs, and patient demographics [4].

This classification system lays the groundwork for adaptive postmarket controls.

Predetermined Change Control Plans and Postmarket Monitoring

AI systems differ from traditional medical devices because they evolve over time. Algorithms can be retrained, performance thresholds adjusted, and models may drift as clinical data changes. To address these challenges, the FDA finalized its guidance on Predetermined Change Control Plans (PCCPs) in December 2024, extending its scope to all AI-enabled functions [5][6].

A PCCP allows manufacturers to make pre-approved changes - such as retraining models or adjusting thresholds - without needing to submit a new 510(k) or PMA application. The framework includes three key components:

- Description of Modifications: Outlines what changes will be made.

- Modification Protocol: Details the methods for development and validation.

- Impact Assessment: Evaluates risks to safety and effectiveness [6][9].
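
A team drafting a PCCP might track the three components as structured data so that gaps are visible early. The sketch below is one hypothetical way to do that in Python; the `PccpDraft` class and its field names are assumptions for illustration, not an FDA template.

```python
from dataclasses import dataclass, fields


@dataclass
class PccpDraft:
    # Title and version are included because each plan must be clearly labeled.
    title: str
    version: str
    description_of_modifications: str
    modification_protocol: str
    impact_assessment: str


def missing_sections(draft: PccpDraft) -> list[str]:
    """List the fields that are empty or contain only whitespace."""
    return [f.name for f in fields(draft) if not getattr(draft, f.name).strip()]


draft = PccpDraft(
    title="Retraining plan",
    version="1.0",
    description_of_modifications="Quarterly retraining on new site data",
    modification_protocol="",
    impact_assessment="  ",
)
print(missing_sections(draft))  # ['modification_protocol', 'impact_assessment']
```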

"The PCCP framework does not eliminate regulatory oversight -- it addresses oversight earlier." – MedDeviceGuide [6]

Postmarket monitoring plays a critical role in identifying issues like performance degradation or data drift, where a model's accuracy declines due to changing clinical patterns or input data [6]. The FDA requires manufacturers to include roll-back plans in PCCPs, enabling devices to revert to a previous version if modifications fail in real-world settings [5][8]. Additionally, companies must maintain version control, clearly labeling each PCCP's title and version. New versions or models must also include updated Unique Device Identifiers (UDIs) [5].
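
To make the idea of drift-triggered roll-back concrete, here is a minimal Python sketch that compares recent accuracy against a validated baseline. The function name and the 0.05 default threshold are assumptions chosen for the example; a real PCCP would define its own acceptance criteria.

```python
from statistics import mean


def should_roll_back(
    baseline_accuracy: float,
    recent_accuracies: list[float],
    max_drop: float = 0.05,  # hypothetical tolerance, not an FDA figure
) -> bool:
    """Return True when mean recent accuracy falls more than max_drop below baseline."""
    if not recent_accuracies:
        # No monitoring data yet, so there is nothing to act on.
        return False
    return baseline_accuracy - mean(recent_accuracies) > max_drop


print(should_roll_back(0.92, [0.85, 0.84, 0.86]))  # True
print(should_roll_back(0.92, [0.91, 0.90]))        # False
```

A single summary metric is a deliberately simple choice here; production monitoring would usually also watch input distributions and subgroup performance.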

These stringent protocols set FDA-regulated AI devices apart from other AI-driven tools that benefit from certain exemptions.

Exemptions Under the 21st Century Cures Act

Not all AI-driven tools in healthcare are subject to strict FDA oversight, thanks to exemptions under the 21st Century Cures Act. The Act excludes certain software categories from the definition of a "medical device", meaning they do not require FDA premarket clearance or registration [10].

Clinical Decision Support (CDS) tools are exempt if they assist - rather than replace - clinical decision-making and allow practitioners to independently evaluate the basis for recommendations. By January 2026, the FDA expanded enforcement discretion to include single-output CDS tools (like Generative AI clinical copilots) that offer one appropriate recommendation, provided the clinician remains the decision-maker and the logic is transparent [10].

"Non-device CDS is exempt from FDA premarket clearance and registration." – Nixon Law Group [10]

General Wellness tools, such as apps for exercise, sleep, or stress management, are also exempt if they are low-risk, non-invasive, and do not claim to diagnose or treat specific conditions. Similarly, Administrative and Health IT software used for tasks like billing, scheduling, or managing electronic health records generally falls outside FDA regulation [10]. Developers of wellness products must carefully avoid language that implies clinical use or disease management, as even subtle changes in labeling or intended use could reclassify a product as a regulated device [10].
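
The CDS conditions described above can be turned into a quick internal screening checklist. The following Python sketch is hypothetical: `CdsProfile` and its three flags paraphrase the criteria in this section and do not cover every statutory test, so a clean result means "worth a closer legal review", not "exempt".

```python
from dataclasses import dataclass


@dataclass
class CdsProfile:
    assists_clinician: bool             # supports rather than replaces the decision
    basis_is_reviewable: bool           # clinician can independently evaluate the logic
    clinician_makes_final_call: bool    # clinician remains the decision-maker


def exemption_blockers(profile: CdsProfile) -> list[str]:
    """Return the reasons a tool may fall outside the non-device CDS exemption."""
    blockers = []
    if not profile.assists_clinician:
        blockers.append("replaces rather than assists clinical decision-making")
    if not profile.basis_is_reviewable:
        blockers.append("basis for recommendations is not independently reviewable")
    if not profile.clinician_makes_final_call:
        blockers.append("clinician is not the final decision-maker")
    return blockers


copilot = CdsProfile(assists_clinician=True, basis_is_reviewable=False,
                     clinician_makes_final_call=True)
print(exemption_blockers(copilot))
```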

FTC Oversight: Consumer Protection and AI in Healthcare

The Federal Trade Commission (FTC) plays a critical role in consumer protection, particularly when it comes to AI tools that engage directly with the public. While the FDA oversees AI classified as medical devices, the FTC enforces Section 5 of the FTC Act to address unfair or deceptive practices in AI marketing, data handling, and deployment [2]. This includes monitoring advertising claims, safeguarding consumer privacy, and preventing algorithmic discrimination.

The FTC takes a two-pronged approach to enforcement. It targets misleading claims and unfair practices while remaining mindful of the balance between regulation and technological progress [11]. Companies that violate Section 5 face heavy penalties, with fines reaching $50,120 per violation per day. Violations involving the Children's Online Privacy Protection Act (COPPA) carry even steeper penalties of up to $51,744 per violation [2]. This consumer protection mandate naturally extends to scrutinizing advertising claims, as explored in the next section.

Truth in Advertising and Deceptive AI Claims

The FTC defines AI broadly, covering any machine-based system that makes predictions, recommendations, or decisions - or even products marketed as having AI capabilities [13]. This wide definition enables the agency to combat "AI washing", where companies exaggerate or falsely claim their products use AI [13].

Every AI-related claim must be truthful, backed by evidence, and presented clearly [11]. Healthcare AI companies face extra scrutiny for advertising claims about neutrality, unbiased performance, or exaggerated accuracy and safety [11][12]. The FTC is particularly vigilant about AI tools - such as "therapy bots" - that claim to treat conditions like depression or anxiety without proper oversight, licensure, or disclaimers. Additionally, the agency evaluates the overall "net impression" of an ad - the main takeaway for consumers - even if the actual wording seems accurate [13].

For example, in January 2025, AccessiBe settled for $1 million over unverified claims that its AI tool could make any website WCAG/ADA compliant within 48 hours [13]. Similarly, DoNotPay paid $193,000 after marketing itself as the "world's first robot lawyer", despite insufficient testing or legal validation of its outputs [13].

The FTC expects companies to substantiate AI performance claims through internal testing, documentation, or third-party validation before launching any marketing efforts [11][13]. If a claim cannot be fully supported, companies must include clear disclaimers about the technology's limitations, such as noting that it "occasionally generates false information." In healthcare, the FTC also requires crisis safeguards - like redirecting distressed users to human professionals - and disclaimers stating the tool is not a licensed medical service [11].
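
One way a marketing or legal team could operationalize this is a pre-launch claim register. The Python sketch below is illustrative only: the `MarketingClaim` class, the evidence labels, and the status strings are assumptions, and passing this check does not by itself satisfy the FTC.

```python
from dataclasses import dataclass, field

# Evidence types named in the paragraph above; the labels are hypothetical.
ACCEPTED_EVIDENCE = {"internal_testing", "documentation", "third_party_validation"}


@dataclass
class MarketingClaim:
    text: str
    evidence: set[str] = field(default_factory=set)
    disclaimer: str = ""


def review_claim(claim: MarketingClaim) -> str:
    """Classify a claim before launch based on recorded evidence and disclaimers."""
    if claim.evidence & ACCEPTED_EVIDENCE:
        return "substantiated"
    if claim.disclaimer.strip():
        return "needs evidence; disclaimer present"
    return "hold: no evidence and no disclaimer"


claim = MarketingClaim("Detects sepsis risk earlier than manual review")
print(review_claim(claim))  # hold: no evidence and no disclaimer
```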

Developers can also be held accountable if their AI tools enable deceptive practices [2]. In September 2024, the FTC charged Rytr, an AI writing assistant, with helping users generate thousands of fake consumer reviews. The resulting settlement barred the company from promoting services designed for creating such reviews [15]. Beyond false advertising, the FTC is equally focused on data privacy issues.

Data Privacy and Security for AI-Driven Health Platforms

The FTC enforces strict privacy and security standards for AI systems handling sensitive patient data. Instead of pre-regulating AI technology, the agency focuses on outcomes, such as preventing algorithmic bias, deceptive practices, and privacy breaches [2]. Companies must ensure that data used for AI training is collected with proper consent and used solely for disclosed purposes, following the principle of purpose limitation [2]. The FTC can also mandate the deletion of data or models derived from improperly obtained sources [2].

Healthcare organizations are required to document their data sources and restrict data usage to the purposes disclosed at the time of collection. They must also offer consumers clear avenues to challenge or appeal adverse decisions made by automated systems, especially when these decisions impact access to care, treatment options, or insurance coverage [2]. Regular bias audits are vital to identifying and addressing potential algorithmic discrimination in high-risk healthcare scenarios.
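
A bias audit often starts with something as simple as comparing outcome rates across groups. The Python sketch below shows one such comparison; the function names and sample data are invented for illustration, and the ratio it computes is just one of several disparity measures an audit might use.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the share of favorable decisions per group."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, favorable in decisions:
        totals[group] += 1
        approved[group] += int(favorable)
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Ratio of the lowest group rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Synthetic example data: (group label, favorable decision?)
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
rates = approval_rates(sample)
print(rates, round(disparity_ratio(rates), 3))
```

What counts as an acceptable ratio is a policy and legal question, so the threshold is intentionally left out of the code.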

For organizations using third-party AI vendors, thorough due diligence is essential. Contracts must clearly allocate liability to ensure compliance with FTC privacy and security standards [2]. The FTC's Bureau of Consumer Protection, supported by the Office of Technology Research and Investigation, actively investigates violations involving AI [2]. As of 2025, Mark Gray serves as the FTC's Chief AI Officer, overseeing internal AI initiatives and strategic adoption [14].

Compliance Across Additional Agencies and Emerging Regulations

Healthcare organizations using AI must navigate a maze of federal and state regulations that go beyond the FDA and FTC. Agencies like HHS, DOJ, and CMS enforce their own rules, creating overlapping requirements. Additionally, state laws are adding another layer of oversight, often supplementing federal mandates. This fragmented framework means a single AI tool might need to meet multiple, sometimes conflicting, standards [1][20].

HHS and HIPAA Integration with AI

HHS plays a critical role in regulating how AI systems handle Protected Health Information (PHI). Through its Office for Civil Rights (OCR), HHS enforces HIPAA compliance via the Privacy, Security, and Breach Notification Rules. These rules ensure that AI systems meet strict standards for PHI management [7]. For instance, a compliance deadline of February 16, 2026, requires updates to the HIPAA Security Rule to address "agentic AI" - systems with autonomous decision-making capabilities. Non-compliance could lead to annual penalties of up to $2.13 million per violation category [7][18].

Organizations must conduct AI-specific risk analyses, addressing threats like AI hallucinations, training data leaks, and prompt injection attacks [18]. HIPAA also mandates detailed logging of all PHI exchanges, with audit records retained for at least six years [7].
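
For the logging and retention requirement, a small Python sketch can show how an audit record and its earliest purge date might be modeled. `PhiAccessRecord` and its fields are hypothetical; the six-year figure comes from the paragraph above.

```python
from dataclasses import dataclass
from datetime import date

RETENTION_YEARS = 6  # retention period cited above


@dataclass(frozen=True)
class PhiAccessRecord:
    system: str      # e.g. the AI service that touched PHI
    user_id: str
    action: str
    logged_on: date


def earliest_purge_date(record: PhiAccessRecord) -> date:
    """Return the first date on which the record may be deleted."""
    logged = record.logged_on
    try:
        return logged.replace(year=logged.year + RETENTION_YEARS)
    except ValueError:
        # Feb 29 has no counterpart in a non-leap target year; use Mar 1.
        return date(logged.year + RETENTION_YEARS, 3, 1)


record = PhiAccessRecord("ai-scribe", "u123", "transcribe_visit", date(2026, 2, 16))
print(earliest_purge_date(record))  # 2032-02-16
```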

Joe Braidwood, CEO of GLACIS, emphasizes the operational nature of HIPAA compliance:

"There is no 'HIPAA certified AI.' HIPAA compliance is not a product attribute - it's an operational state that depends on how AI is deployed, configured, documented, and monitored" [7].

"The question isn't whether your AI vendor has policies. It's whether you can prove those policies executed when the plaintiff's attorney asks for evidence during discovery." – GLACIS Compliance Guide [7]

AI tools that replace clinical judgment may also fall under FDA regulation as medical devices, while their data handling remains under HHS/OCR oversight [16]. The DOJ and FTC come into play when AI leads to fraudulent claims under the False Claims Act (FCA). For example, in June 2025, the DOJ prosecuted a case involving AI-generated voice recordings of Medicare beneficiaries, resulting in $703 million in false claims [3][17].

Legal risks aren't limited to fraud. In November 2025, Sharp HealthCare faced a proposed class action lawsuit alleging that its AI scribe system recorded over 100,000 patients without proper consent. This highlights the importance of ensuring Business Associate Agreements (BAAs) explicitly cover AI services. For example, OpenAI only offers BAAs for its Enterprise tier, not for standard API or consumer versions [7].
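
A simple inventory check can surface AI services that touch PHI without a signed BAA. This Python sketch assumes a hypothetical `AiService` record and made-up service names; it is meant to show the shape of the check, not a complete vendor review.

```python
from dataclasses import dataclass


@dataclass
class AiService:
    name: str
    handles_phi: bool
    baa_signed: bool


def services_missing_baa(inventory: list[AiService]) -> list[str]:
    """Return the names of services that handle PHI but have no BAA on file."""
    return [s.name for s in inventory if s.handles_phi and not s.baa_signed]


inventory = [
    AiService("ambient-scribe", handles_phi=True, baa_signed=True),
    AiService("chat-assistant", handles_phi=True, baa_signed=False),
    AiService("scheduling-bot", handles_phi=False, baa_signed=False),
]
print(services_missing_baa(inventory))  # ['chat-assistant']
```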

Emerging Federal Legislation and Bias Audits

While federal AI legislation like the proposed America AI Act remains stalled, states are enacting their own laws to regulate AI in healthcare. In 2024 alone, nine states passed health-related AI laws [19]. The scale of adoption adds urgency: by March 2026, the FDA had authorized 1,430 AI-enabled devices [19]. Colorado's SB 24-205, effective June 30, 2026, requires impact assessments for "high-risk" AI to address algorithmic discrimination [19]. Similarly, California's AB 489, effective January 1, 2026, mandates that healthcare facilities disclose when AI is involved in patient care [18].

HHS OCR is leveraging Section 1557 of the Affordable Care Act to address algorithmic discrimination in clinical tools used for patient screening and risk stratification [19]. Meanwhile, the ONC's HTI-1 rule requires transparency for predictive decision support tools, detailing their development and functionality [19]. CMS has also stepped in, prohibiting Medicare Advantage plans from using algorithms as a blanket substitute for human review in coverage decisions [19].

At the federal level, a December 2025 Executive Order, "Ensuring a National Policy Framework for Artificial Intelligence", aims to streamline regulations, potentially clashing with stricter state laws [3][17]. Federal agencies are also adopting AI for enforcement purposes. Starting January 1, 2026, the CMS Innovation Center will implement the WISeR Model in six states, using AI to automate prior authorization for certain outpatient services and reduce fraud [3][17].

To keep up with evolving regulations, healthcare organizations need to adapt their compliance processes. This includes conducting annual bias audits for high-risk systems to assess their impact on groups defined by protected characteristics such as race, age, or disability [19]. Organizations must also implement systems to notify patients when AI significantly influences decisions about their care and provide a clear path for patients to challenge those decisions through human review [19]. Aligning with the NIST AI Risk Management Framework (AI RMF) can also offer legal protection under certain state laws [19][20].

Streamlining Multi-Agency Compliance with Censinet RiskOps™

Navigating compliance across multiple federal agencies like the FDA, FTC, and HHS can be a daunting task for healthcare organizations deploying AI. Each agency has its own unique set of documentation requirements, timelines, and oversight protocols. Censinet RiskOps™ simplifies this complexity by consolidating AI risk management into one centralized platform. The platform automates risk assessments, tracks compliance tasks, and aligns operations with changing regulatory standards. By unifying these diverse requirements, it not only makes compliance more manageable but also strengthens the focus on patient safety and data security. This streamlined process ensures organizations are better prepared for future risk assessments and governance needs.

Automating AI Risk Assessments

Censinet RiskOps™ reduces the time spent on manual reviews by up to 70% by using automated workflows to validate evidence [21][22]. Users can upload AI model data, which the platform cross-references with FDA and FTC requirements. It identifies gaps, such as missing postmarket monitoring data, and suggests remediation steps tailored to agency guidelines [21][23].

The platform also supports Predetermined Change Control Plans by providing detailed checklists and generates policy drafts aligned with HIPAA and bias audit standards. This ensures clear traceability and consistent compliance [21][22]. For example, when validating a diagnostic AI tool, the system benchmarks it against 21st Century Cures Act exemptions to check for regulatory relief eligibility. It also tracks tasks, sets deadlines, and monitors progress via intuitive dashboards [21].

Unified AI Governance and Oversight Dashboards

Censinet RiskOps™ brings all AI-related risks, tasks, and workflows into one dashboard, encouraging collaboration across departments. Legal teams can evaluate FTC advertising claims while clinicians focus on FDA device safety - all within the same platform [21][22]. Features like role-based access, real-time updates, and metrics such as compliance completion rates make this process seamless.

This centralized approach eliminates silos and improves response times to regulatory updates by 50% [21][22]. For instance, a hospital team working on HIPAA-AI integration receives automated alerts about FTC privacy updates, ensuring compliance across all fronts. Tools like comment threads on specific risk items further enhance teamwork without requiring multiple systems [21]. These integrated features also strengthen the platform’s benchmarking capabilities.

Benchmarking Against Regulatory Standards

Censinet RiskOps™ allows organizations to use benchmarking templates tailored to specific regulations - like FDA risk categories or FTC consumer protection metrics. By uploading AI performance data, users receive detailed gap analyses and compliance scores [21][23]. For example, benchmarking a predictive analytics tool might yield an 85% FDA compliance score while highlighting FTC data security gaps, enabling timely updates to policies.
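
To give a feel for what a gap analysis and compliance score could look like in principle, here is a generic Python sketch. It is not Censinet's scoring method or API; the control names and the "share of controls met" formula are assumptions made purely for illustration.

```python
def compliance_score(controls: dict[str, bool]) -> float:
    """Percentage of controls marked as met (hypothetical scoring rule)."""
    if not controls:
        raise ValueError("at least one control is required")
    return 100 * sum(controls.values()) / len(controls)


def gaps(controls: dict[str, bool]) -> list[str]:
    """Names of controls that are not yet met, sorted for stable reporting."""
    return sorted(name for name, met in controls.items() if not met)


# Made-up FDA-related controls for a single AI tool.
fda_controls = {
    "pccp_on_file": True,
    "rollback_plan": True,
    "postmarket_monitoring": False,
    "udi_updated": True,
}
print(compliance_score(fda_controls))  # 75.0
print(gaps(fda_controls))              # ['postmarket_monitoring']
```

An unweighted score like this treats every control as equally important, which is rarely true; a real program would likely weight controls by risk.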

The platform compares organizational performance against industry standards and offers actionable recommendations, such as improving bias audits in response to new laws. With modular templates and a constantly updated standards library, the system adapts to emerging regulations. For example, it includes automatic bias audit checklists for proposed legislation [21][22]. When HHS updates its guidance, the platform re-scores existing AI tools overnight, helping organizations stay aligned with evolving requirements.

Conclusion

Healthcare organizations using AI must navigate a maze of regulatory requirements involving multiple agencies. The FDA regulates AI-enabled medical devices through risk-based classifications and Predetermined Change Control Plans. The FTC enforces rules to ensure truthful advertising and protect consumer privacy, while HHS focuses on HIPAA compliance for AI systems handling protected health information. Additionally, new state laws and federal nondiscrimination enforcement are introducing bias audits and impact assessments to address disparities in diagnostics. Non-compliance can be costly - HHS experts caution that HIPAA violations alone can result in fines exceeding $50,000 per infraction, and FDA recalls are another potential consequence [21][22]. A Deloitte report from 2025 revealed that 25% of healthcare AI projects faced delays due to the complexities of multi-agency compliance [21][22].

Fragmented systems for tracking FDA postmarket data, FTC advertising claims, privacy compliance, and bias audits only add to the risk of enforcement actions. This disjointed approach increases the chance of oversight, as seen in FTC settlements with health apps that overstated their AI diagnostic capabilities [22].

Censinet RiskOps™ offers a solution by unifying multi-agency compliance efforts into a single platform. It automates risk assessments across FDA classifications, FTC privacy regulations, and HIPAA standards, cutting manual audit time by up to 70% [23]. Reports indicate a 50% drop in non-compliance incidents, thanks to proactive governance tools like role-based access for compliance teams [21]. For instance, a mid-sized hospital chain used the platform to align 15 AI imaging tools, addressing potential FTC advertising risks and avoiding hefty fines [22]. By consolidating compliance processes, this approach not only protects patients but also strengthens operational readiness in the face of evolving regulations.

FAQs

How do I know if my healthcare AI is an FDA-regulated medical device or an exempt CDS tool?

To figure out whether your healthcare AI falls under FDA regulation or qualifies as exempt Clinical Decision Support (CDS), start by examining its intended use and functionality. If the AI is designed to diagnose, treat, or prevent disease, or to replace a clinician's judgment, it is likely a regulated medical device. Tools that assist rather than replace clinical decision-making, and that let the clinician independently evaluate the basis for each recommendation, may qualify as non-device CDS. Low-risk general wellness tools that make no diagnostic or treatment claims may also be exempt. Because small changes in labeling or intended use can shift a product into the regulated category, always check current FDA guidance before settling on a classification.

What evidence do I need to back up AI marketing claims to avoid FTC enforcement?

To steer clear of FTC enforcement, make sure your AI marketing claims are truthful, clearly presented, and substantiated before launch. Substantiation can come from internal testing, documentation, or third-party validation. Where a claim cannot be fully supported, disclose the tool's limitations clearly, including known biases or areas where it might fall short. Keep in mind that the FTC evaluates the overall net impression of an ad, not just its literal wording. Grounding your claims in evidence keeps you compliant and strengthens trust with your audience.

What HIPAA steps should we take before using AI with PHI (including vendor BAAs)?

Before incorporating AI tools with Protected Health Information (PHI), it's critical to ensure compliance with privacy and security regulations. Here's how you can do that:

- Establish Business Associate Agreements (BAAs): These agreements outline how data can be used, assign liability, and dictate breach reporting protocols.

- Conduct Vendor Risk Assessments: Evaluate vendors for strong security measures, such as encryption, access controls, and other safeguards.

- Verify Certifications: Look for certifications like SOC 2 or HITRUST to confirm the vendor meets industry security standards.

Additionally, include provisions for continuous monitoring, a clear incident response plan, and adherence to HIPAA requirements. These steps help minimize risks and safeguard patient information effectively.